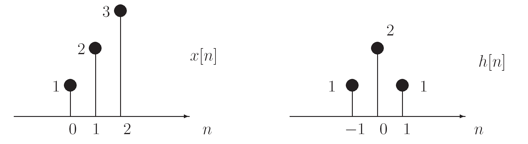

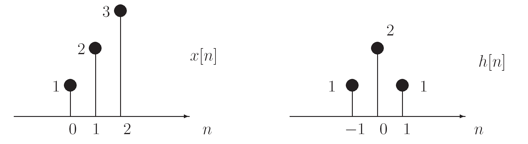

Determine the output y[n] for a system with the input x[n] and unit-sample response h[n] shown below. Assume h[n]=0 and x[n]=0 for any times n not shown.

y[n] = Σx[k]h[n-k] = x[0]h[n] + x[1]h[n-1] + x[2]h[n-2]

= δ[n+1] + 4δ[n] + 8δ[n-1] + 8δ[n-2] + 3δ[n-3]
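The convolution sum above can be checked numerically. The plots of x[n] and h[n] are not reproduced in this text, so the sample values in this sketch are hypothetical: they are one choice that happens to be consistent with the stated result, not necessarily the values in the figures.

```python
def convolve(x, h):
    """Direct-form convolution of two finite sequences."""
    y = [0] * (len(x) + len(h) - 1)
    for k, xk in enumerate(x):
        for m, hm in enumerate(h):
            y[k + m] += xk * hm
    return y

# Hypothetical values (assumption): x[0..2] and h[-1..1].
x, x_start = [1, 2, 3], 0
h, h_start = [1, 2, 1], -1

y = convolve(x, h)
y_start = x_start + h_start  # index of the first output sample

for n, value in enumerate(y, start=y_start):
    print(f"y[{n}] = {value}")
```

The first output index is the sum of the first indices of x and h, which is why the result starts at n = -1.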

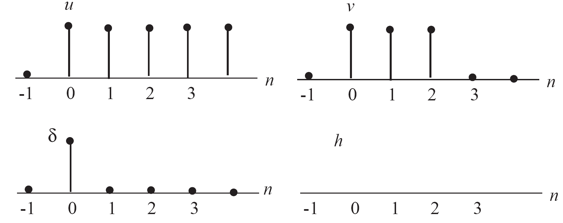

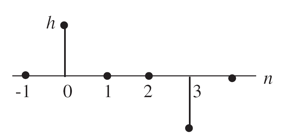

The system is causal, so h[n]=0 for n<0.

v[n] = Σu[k]h[n-k] = h[n] + h[n-1] + h[n-2] + ...

h[n] = v[n] - h[n-1] - h[n-2] - ...

h[0] = v[0] - h[-1] - ... = v[0] = 1

h[1] = v[1] - h[0] - h[-1] - ... = v[1] - h[0] = 1 - 1 = 0

h[2] = v[2] - h[1] - h[0] = 1 - 0 - 1 = 0

h[3] = v[3] - h[2] - h[1] - h[0] = 0 - 0 - 0 - 1 = -1

h[4] = v[4] - h[3] - h[2] - h[1] - h[0] = 0 - (-1) - 0 - 0 - 1 = 0

h[n] = 0, n ≥ 4
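The recursion above is easy to run mechanically. This is a minimal sketch that uses the v[n] values appearing in the derivation (v[0]=v[1]=v[2]=1, v[3]=v[4]=0) and assumes v[n] stays at 0 for later n.

```python
# Unit-step response values from the derivation; v[n] = 0 assumed for n >= 3.
v = [1, 1, 1, 0, 0, 0]

h = []
for n in range(len(v)):
    # h[n] = v[n] - h[n-1] - h[n-2] - ... - h[0]   (causal, so h[n] = 0 for n < 0)
    h.append(v[n] - sum(h))

print(h)
```

Because the subtracted sum h[0] + ... + h[n-1] equals v[n-1], the same result follows from the first difference h[n] = v[n] - v[n-1].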

A signal is transmitted at 20 samples/bit and 8 bits/symbol.

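    def receive(samples, samples_per_bit, bits_per_symbol):
        # Sample each bit three quarters of the way through its interval.
        # Integer division keeps the slice index an int.
        index = samples_per_bit * 3 // 4
        return samples[index::samples_per_bit]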

The following figures show plots of several received waveforms. The transmitter is sending sequences of binary symbols (i.e., either 0 or 1) at some fixed symbol rate, using 0V to represent 0 and 1V to represent 1. The horizontal grid spacing is 1 microsecond (1e-6 sec).

Answer the following questions for each plot:

Ben Bitdiddle is doing a 6.02 lab on understanding the effect of noise on data reception, and is confused about the following questions. Please help him by answering them.

In these questions, assume that:

The channel has non-zero random noise, but unless stated otherwise, assume that the noise has 0 mean and that it is a Gaussian with finite variance. The noise affects the received samples in an additive manner, as in the labs you've done.

The bit error rate is a function of Φ[(vth - (signal_level + noise_mean))/noise_sigma] multiplied as appropriate by alpha or beta. So the bit error rate clearly depends on the signal level, the mean and variance of the noise, and the digitization threshold.

The number of samples per bit doesn't enter directly into the bit error calculation, but more samples per bit gives each transition more time to reach its final value, reducing inter-symbol interference. This means that the eye will be more open. In the presence of noise, a wider eye means a lower bit error rate.

The magnitude of the BER is, of course, a function of the noise variance, but for a given noise variance, if alpha = beta, the minimum BER is achieved by setting the digitization threshold at 0.5. So (e) is false.

As we saw in PSet #3, when alpha ≠ beta, the bit error rate is minimized when the digitization threshold moves away from the more probable signal. Suppose alpha > beta. The digitization threshold would increase so P_01 would get smaller and P_10 larger. So (c) is not true and (d) is true.

BER = 0.5*(1 - Φ[.5/σ]) + 0.5*Φ[-.5/σ]

= Φ[-.5/σ]

Doubling the noise variance is the same as multiplying σ by sqrt(2), so the resulting BER would be

BERnew = Φ[-.5/(sqrt(2)*σ)]

The change in the bit error rate is given by BERnew - BER.
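To see the size of the change, the two expressions can be evaluated for a concrete noise level. This is a sketch only: the value of σ below is a hypothetical choice, and Φ is computed from the standard library's `math.erfc`.

```python
import math

def phi(x):
    """Standard normal CDF, written in terms of the complementary error function."""
    return 0.5 * math.erfc(-x / math.sqrt(2.0))

def ber(sigma):
    """BER for 0V/1V signaling, threshold 0.5V, equally likely bits."""
    return phi(-0.5 / sigma)

sigma = 0.25  # hypothetical noise standard deviation in volts (assumption)

ber_old = ber(sigma)
ber_new = ber(math.sqrt(2) * sigma)  # doubling the variance scales sigma by sqrt(2)

print(f"BER    = {ber_old:.6f}")
print(f"BERnew = {ber_new:.6f}")
print(f"change = {ber_new - ber_old:.6f}")
```

The change depends on σ, so the answer is best left in terms of Φ unless a specific noise level is given.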

Problem .

If a transmitter can generate signals between 0V and 5V, how many digital signaling levels can we have if the noise limit is ±.2V and the receiver requires a .1V forbidden zone for each thresholding operation that it needs to implement?

Our digital signaling convention requires a ±.2V region around each signaling level and the regions must be separated by a .1V forbidden zone. That means we must have .2+.1+.2 = .5V between signaling levels. With a 5V signaling range, we can get (5/.5)+1=11 digital signaling levels.
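The level count can be reproduced with a few lines of arithmetic. This sketch works in integer tenths of a volt (an implementation choice made here to avoid floating-point rounding), and the variable names are illustrative.

```python
# All quantities in tenths of a volt.
noise_margin = 2     # +/- 0.2 V around each signaling level
forbidden_zone = 1   # 0.1 V between adjacent regions
signal_range = 50    # 0 V to 5 V

# Distance between adjacent signaling levels: upper margin + gap + lower margin.
spacing = noise_margin + forbidden_zone + noise_margin

# Levels sit at 0, spacing, 2*spacing, ... up to the top of the range.
levels = signal_range // spacing + 1

print(spacing / 10, "V between levels,", levels, "levels")
```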

Problem .

In this problem, we will understand the impact of imperfect clocks and clock drift on clock recovery in communication systems. A transmitter and receiver are communicating using a perfect communication channel (that is, ignore the effects of ISI and noise for now). The transmitter transmits every bit using symbols of duration 10 samples each. The receiver also tries to sample the channel at the same frequency as the transmitter to obtain 10 samples for each symbol, and then digitizes the 10th sample of the symbol to recover the transmitted bit. However, the non-ideal clocks at the transmitter and receiver "drift away" from each other, as a result of which the receiver ends up sampling the channel 10 times in only 9 sample periods of the transmitter. To cope with this clock drift, the receiver resets its sample counter on transitions from 0 to 1 or vice versa. What is the maximum number of contiguous 0s or 1s that the data can have (i.e., the maximum number of bits without transitions in between) before one starts to see bit errors due to the clock drift?

One should have no more than 9 consecutive bits without a transition in order not to see bit errors. Bit #10 at receiver = Sample #100 at receiver = Sample #90 at sender = last sample of bit #9 at transmitter => bit error.
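The sample-counting argument can be checked by brute force. This sketch assumes samples and bits are numbered from 1 starting at the last transition, and that the receiver's k-th bit is digitized at receiver sample 10k, which coincides with transmitter sample 9k; the function name is illustrative.

```python
from itertools import count

SAMPLES_PER_BIT = 10        # transmitter samples per bit
TX_PERIODS_PER_RX_BIT = 9   # receiver takes its 10 samples in 9 transmitter periods

def tx_bit_seen(rx_bit):
    """Transmitter bit (1-based) that contains the sample digitized for rx_bit."""
    tx_sample = rx_bit * TX_PERIODS_PER_RX_BIT           # e.g. rx sample 100 -> tx sample 90
    return (tx_sample + SAMPLES_PER_BIT - 1) // SAMPLES_PER_BIT  # ceiling division

# First receiver bit that is read from the wrong transmitter bit.
first_error = next(k for k in count(1) if tx_bit_seen(k) != k)
max_run = first_error - 1

print("first misaligned receiver bit:", first_error)
print("longest safe run without a transition:", max_run)
```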

Problem .

Consider the problem of synchronization to identify the start of a message between a transmitter and receiver. Suppose the designers of the communication system decide to use the sequence of bits '01111110' to signal the start of a message. What property should the designers enforce on the data to ensure correct synchronization?

Care should be taken to ensure that the synchronization pattern does not appear in the data. For example, one can get around this problem by encoding the data in such a way that the synchronization pattern does not appear in the data stream (e.g., 8b/10b).
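As an illustration of enforcing that property, here is a sketch of bit stuffing. Note that this is a different technique from the 8b/10b code mentioned above: after every five consecutive 1s the sender inserts a 0, so six 1s in a row (and hence the pattern '01111110') can never occur in the encoded data. The function names are illustrative.

```python
SYNC = "01111110"

def stuff(bits):
    """Insert a '0' after every run of five consecutive '1's."""
    out, run = [], 0
    for b in bits:
        out.append(b)
        run = run + 1 if b == "1" else 0
        if run == 5:
            out.append("0")  # stuffed bit breaks the run
            run = 0
    return "".join(out)

def unstuff(bits):
    """Remove the '0' that follows every run of five consecutive '1's."""
    out, run, skip = [], 0, False
    for b in bits:
        if skip:             # this is a stuffed bit: drop it
            skip, run = False, 0
            continue
        out.append(b)
        run = run + 1 if b == "1" else 0
        if run == 5:
            skip = True
    return "".join(out)

data = "0111111011111111"    # raw data that contains the sync pattern
stuffed = stuff(data)
print(stuffed, SYNC in stuffed, unstuff(stuffed) == data)
```

The cost is a slightly longer transmission; the receiver removes the stuffed bits before handing the data on.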

Problem .

The output of a particular communication channel is given by

y[n] = αx[n] + βx[n-1] where α > β

It's easy to verify both properties given the channel response above, so the channel is linear.

To be time invariant the channel must have the property that if we shift the input by some number of samples s, the output also shifts by s samples. Again that property is easily verified given the channel response above, so the channel is time invariant.

Using the channel response given above, the channel's unit-sample response can be computed as

h[0]=α, h[1]=β, h[n]=0 for all other values of n

If the input is x[n] = [1, 0, 0, 1, 1, 0, 1, 1], followed by all 1's, then what is the channel's output assuming α=.7 and β=.3?

Convolving x[n] with h[n] we get

y[n] = [.7, .3, 0, .7, 1, .3, .7, 1], followed by all 1's.
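The same output follows from applying the channel equation y[n] = αx[n] + βx[n-1] sample by sample. A minimal sketch, assuming x[n] = 0 for n < 0 (the function name is illustrative):

```python
def channel(x, alpha, beta):
    """Apply y[n] = alpha*x[n] + beta*x[n-1], with x[-1] taken as 0."""
    y, prev = [], 0
    for sample in x:
        y.append(alpha * sample + beta * prev)
        prev = sample
    return y

x = [1, 0, 0, 1, 1, 0, 1, 1]
y = [round(v, 2) for v in channel(x, alpha=0.7, beta=0.3)]  # round away float noise
print(y)
```

Once the input settles at all 1's, every further output sample is α + β = 1.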

y[n] = [.7, 1, 1, .3, .7, 1, .3, 0], followed by all 0's.

w[n] = (1/h[0])(y[n] - h[1]w[n-1]) = y[n]/.7 - (.3/.7)w[n-1]

so

w[n] = [1, 1, 1, 0, 1, 1, 0, 0], followed by all 0's
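The deconvolution recurrence can be run directly on the received samples. This sketch assumes w[-1] = 0 and rounds the result to the nearest integer to remove floating-point noise.

```python
h0, h1 = 0.7, 0.3
y = [0.7, 1, 1, 0.3, 0.7, 1, 0.3, 0]

w, prev = [], 0.0
for sample in y:
    # w[n] = (1/h[0]) * (y[n] - h[1]*w[n-1])
    prev = (sample - h1 * prev) / h0
    w.append(prev)

w_bits = [round(v) for v in w]
print(w_bits)
```

Because h[1]/h[0] < 1, any error in an earlier w[n-1] shrinks as the recurrence proceeds rather than growing.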

Problem .

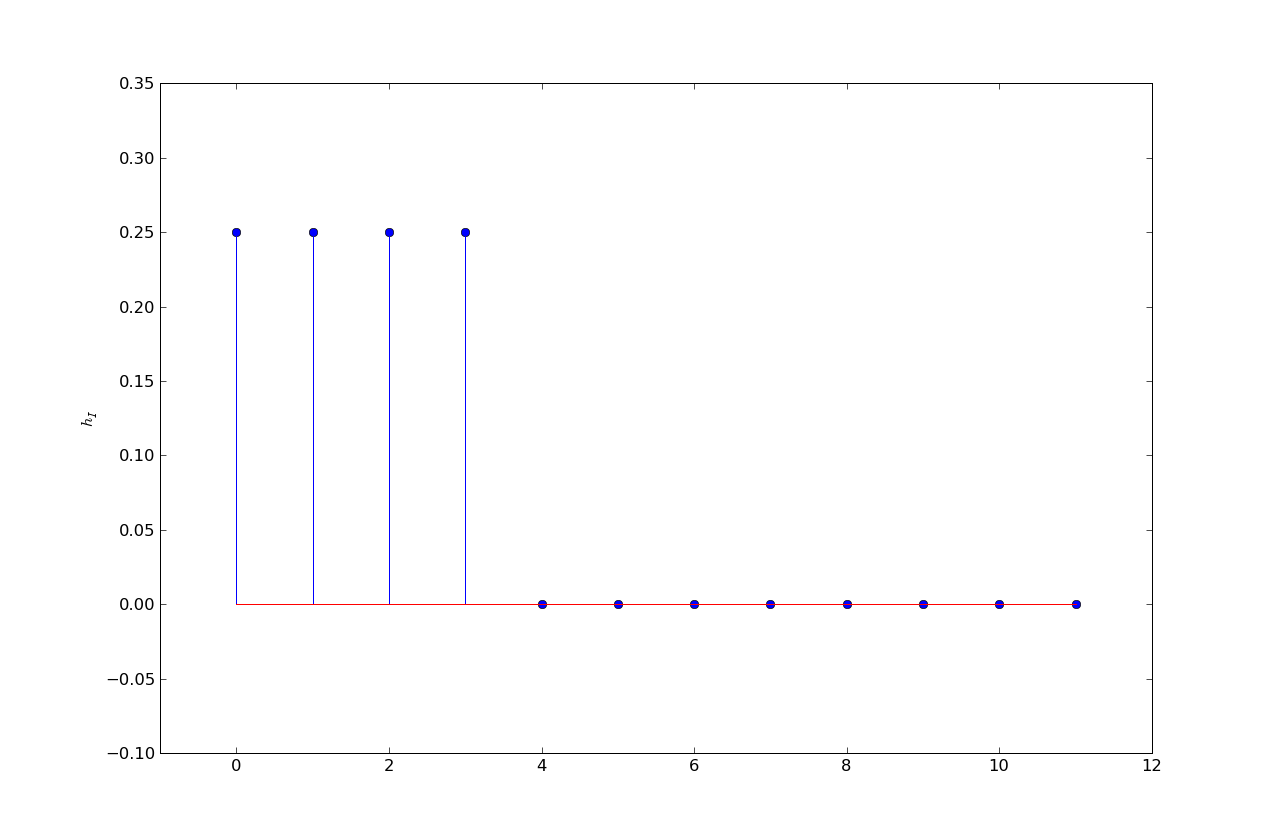

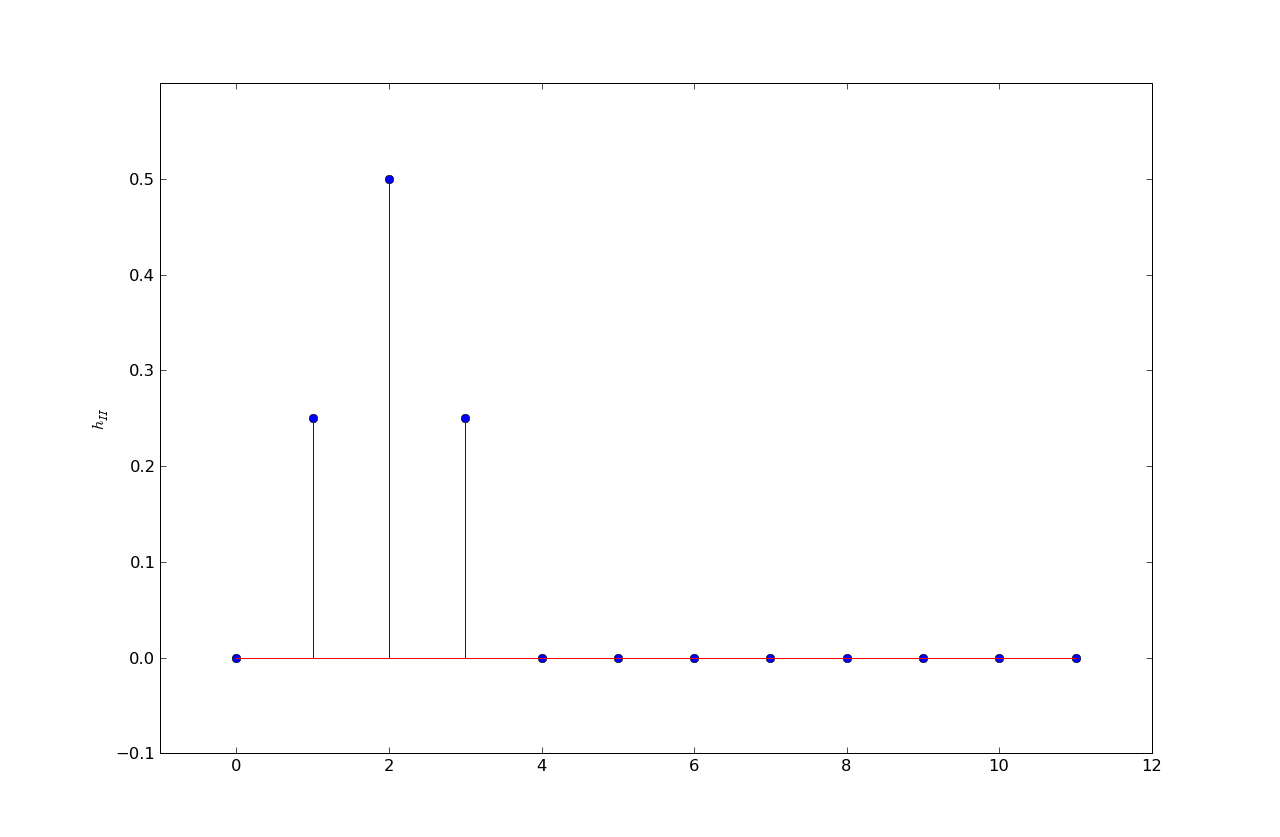

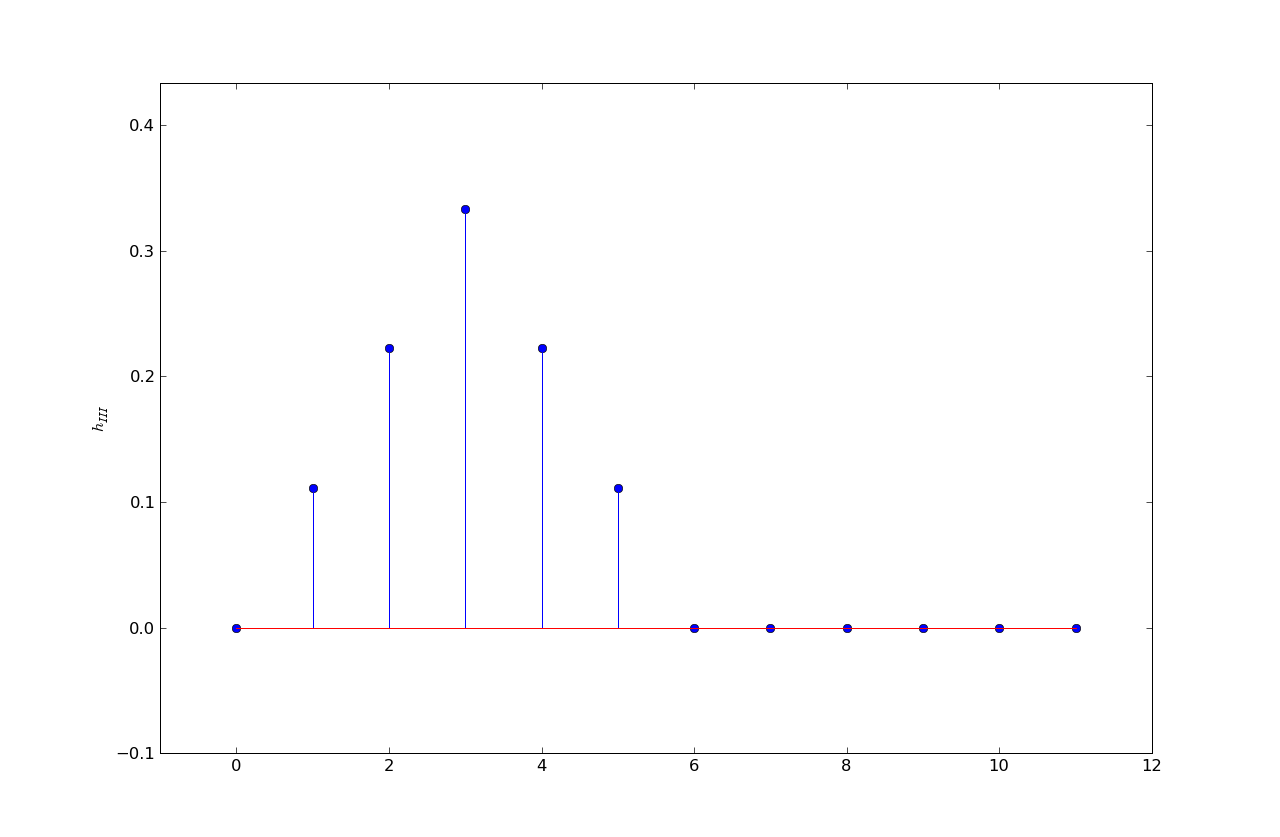

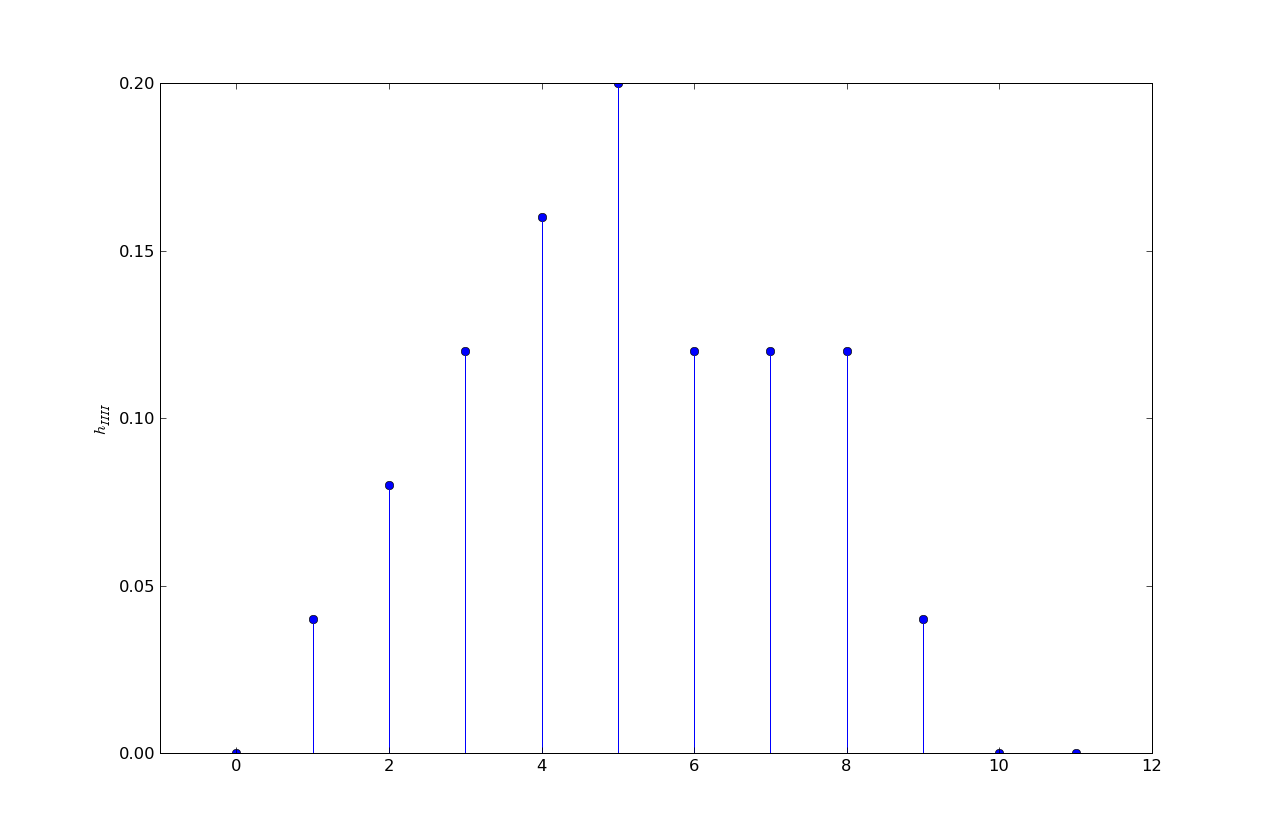

Suppose four different wires {I,II,III,IIII} have four different unit sample responses:

h1 = .25, .25, .25, .25, 0, ...

h2 = 0, .25, .5, .25, 0, ...

h3 = .11, .22, .33, .22, .11, 0, ...

h4 = .04, .08, .12, .16, .20, .12, .12, .12, .04, 0, ...

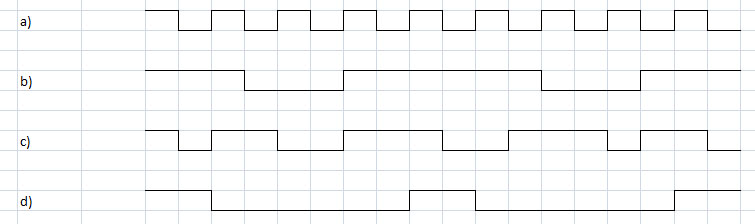

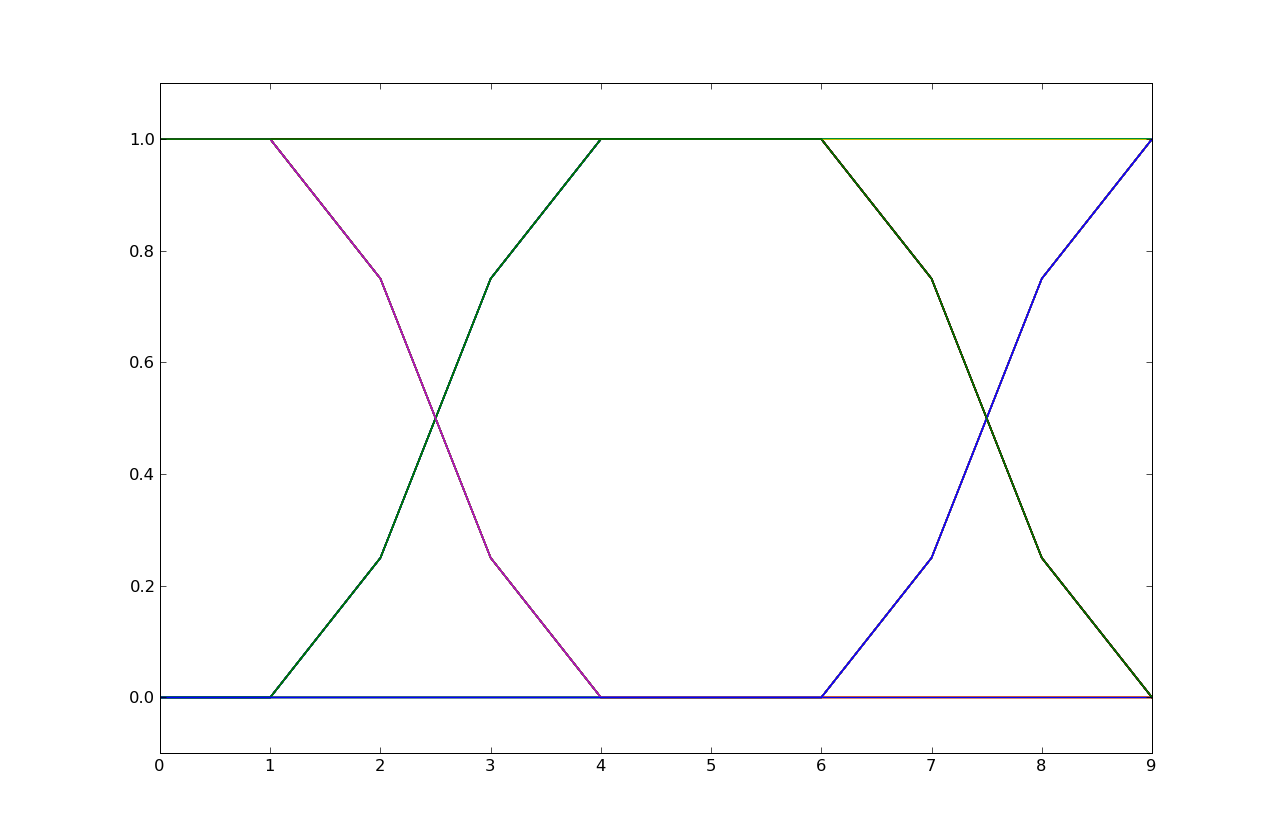

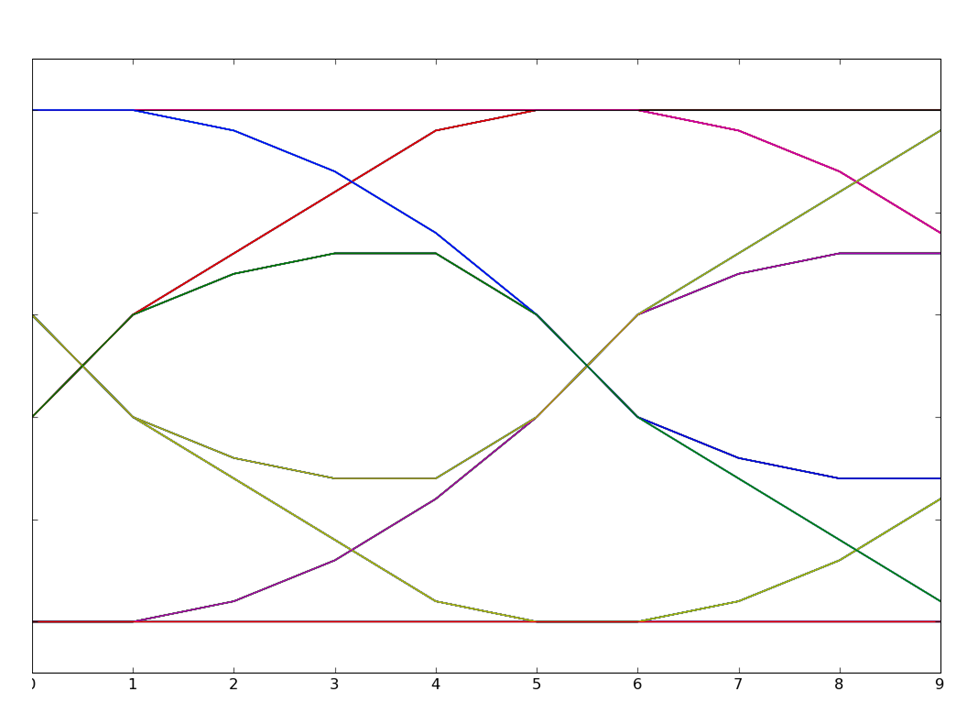

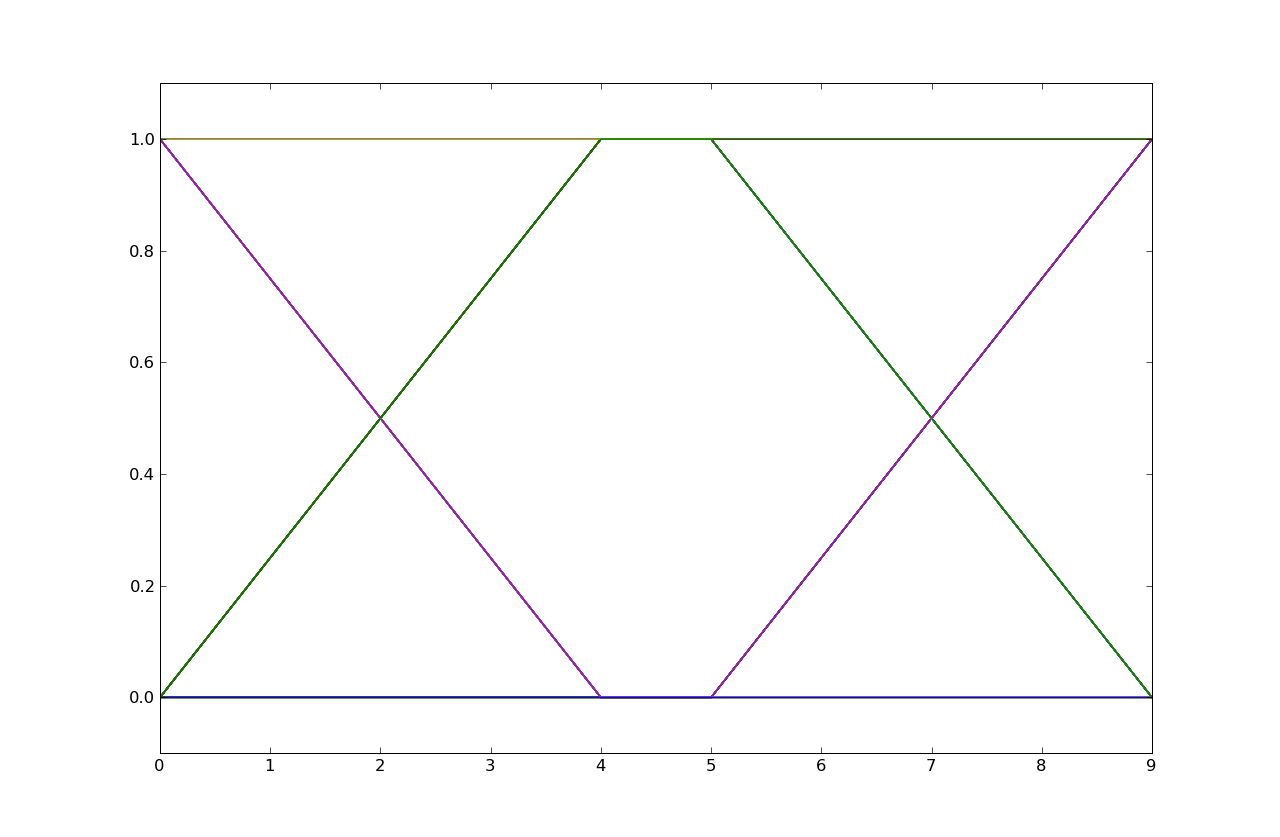

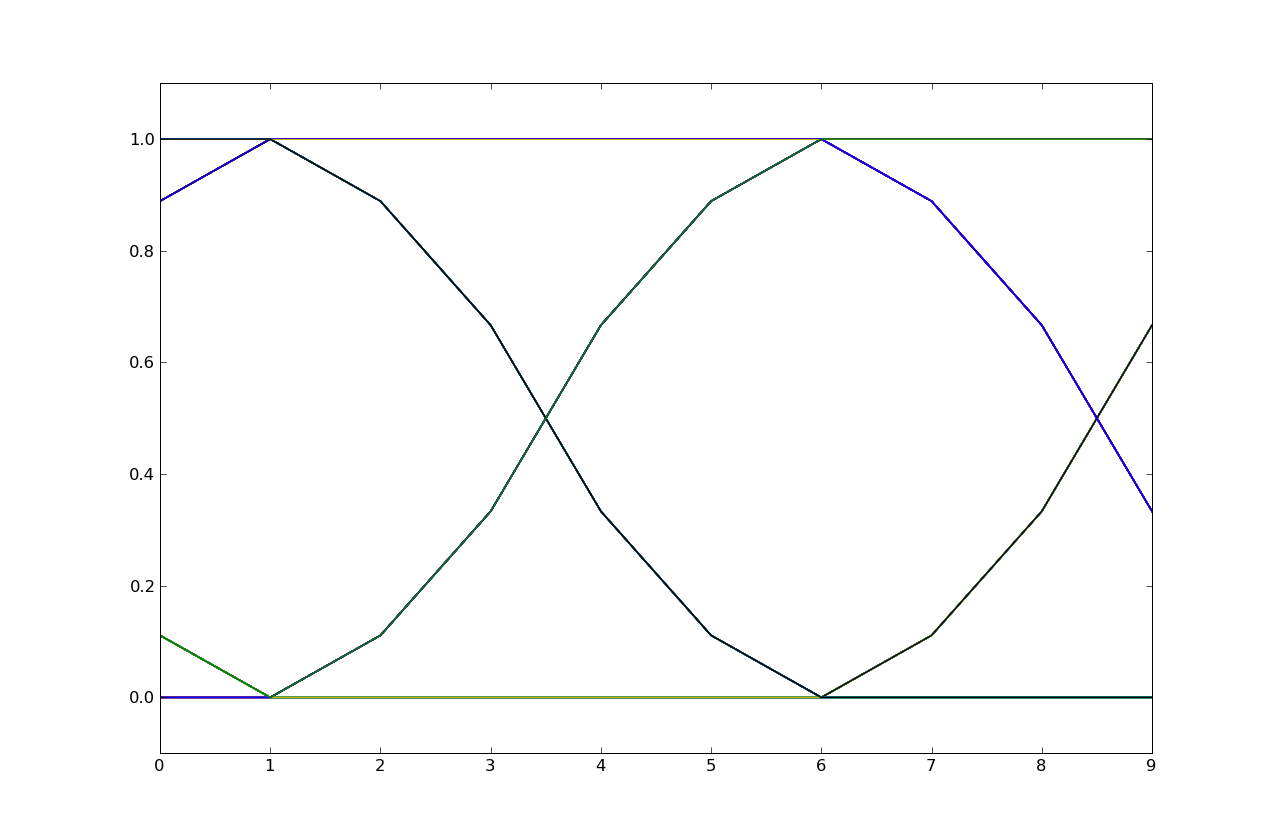

Each of the following eye diagrams is associated with transmitting bits using one of the four wires, where five samples were used per bit. That is, a one bit is five one-volt samples and a zero bit is five zero-volt samples. Please determine which wire was used in each case.

The eye diagram is from h2. Note that the signal transitions take three samples to complete and that the transitions occur in 3 steps with a larger slope in the middle step, an indicator of a response with 3 taps with a larger middle tap.

The eye diagram is from h4. Note that the signal transitions take more than five samples to complete and hence result in considerable inter-symbol interference. Response h4 is the only response that's non-zero for more than 5 taps.

The eye diagram is from h1. Note that the signal transitions take four samples to complete and that the transitions have constant slope, an indicator of a response with 4 equal taps.

The eye diagram is from h3. Note that the signal transitions take five samples to complete and that the transitions occur in 5 steps with larger slopes in the middle of the transition, an indicator of a response with 5 taps with larger middle taps.

Problem .

Consider the second of the four eye diagrams in Problem 10. Determine the eight unique voltage values for sample number 8.

If we convolve h4 with a unit step we get the unit-step response:

| n | 0 | 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 | 9 | ... |
|---|---|---|---|---|---|---|---|---|---|---|---|
| hstep[n] | 0.0 | 0.04 | 0.12 | 0.24 | 0.40 | 0.60 | 0.72 | 0.84 | 0.96 | 1.00 | ... |

Now if we consider all possible values of the current bit and the previous two bits (listed earliest-to-latest in the table below) we can use superposition of hstep to compute the possible values at sample time 8, which is the same as asking for y[12]. Note that you have to think about how the sample numbers in the eye diagram align with the sample numbers of the bits -- the eye diagram is not necessarily aligned with bit boundaries (e.g., it isn't in this case).

| bits | decomposed into unit steps | computation for y[12] |
|---|---|---|
| 1 1 1 | u[n] | y[12] = hstep[12] = 1 |
| 1 1 0 | u[n] - u[n-10] | y[12] = hstep[12] - hstep[2] = 1 - .12 = .88 |
| 1 0 1 | u[n] - u[n-5] + u[n-10] | y[12] = 1 - .84 + .12 = .28 |
| 1 0 0 | u[n] - u[n-5] | y[12] = 1 - .84 = .16 |
| 0 1 1 | u[n-5] | y[12] = .84 |
| 0 1 0 | u[n-5] - u[n-10] | y[12] = .84 - .12 = .72 |
| 0 0 1 | u[n-10] | y[12] = .12 |
| 0 0 0 | | y[12] = 0 |

So the eight unique values for y[12] are 0, .12, .16, .28, .72, .84, .88 and 1.
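The superposition can also be enumerated programmatically. This sketch takes the hstep values verbatim from the text, assumes hstep[n] stays at 1.00 beyond the listed entries, and places the three bit boundaries at n = 0, 5 and 10; the function names are illustrative.

```python
from itertools import product

# Unit-step response as tabulated in the text for n = 0..9.
HSTEP = [0.0, 0.04, 0.12, 0.24, 0.40, 0.60, 0.72, 0.84, 0.96, 1.00]
SAMPLES_PER_BIT = 5

def hstep(n):
    if n < 0:
        return 0.0
    return HSTEP[n] if n < len(HSTEP) else 1.0  # assumed to stay at 1.00

def y_at(n, bits):
    """Superpose one shifted step per bit transition (bits listed earliest first)."""
    total, prev = 0.0, 0
    for i, bit in enumerate(bits):
        total += (bit - prev) * hstep(n - i * SAMPLES_PER_BIT)
        prev = bit
    return total

values = sorted({round(y_at(12, bits), 2) for bits in product([0, 1], repeat=3)})
print(values)
```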

Problem .

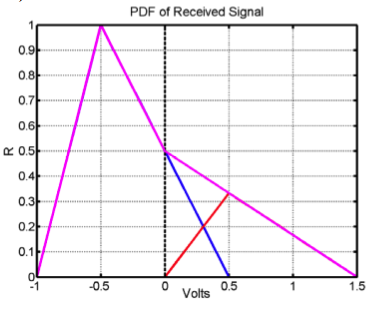

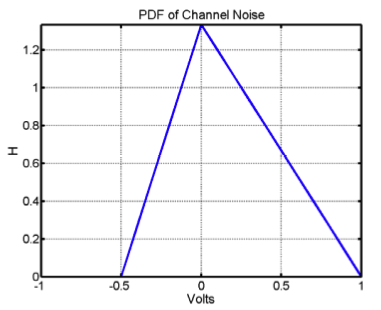

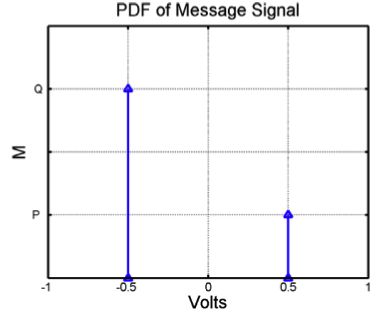

Messages are transmitted along a noisy channel using the following protocol: a "0" bit is transmitted as -0.5 Volt and a "1" bit as 0.5 Volt. The PDF of the total noise added by the channel, H, is shown below.

We know that P=3Q and that P+Q=1, so P=0.75 and Q=.25.
