The elapsed duration is the time difference between the current time and the time of the note. When a pattern of 5 consecutive notes is constructed, the duration fields will be [4, 3, 2, 1, 0], and they will exactly match those of any other pattern of 5 consecutive notes. However, there is no constraint on which data may enter a pattern.

For example, a pattern table can have the first element come from voice X, the second from voice Y, and the third from voice Z. A pattern in that table could be "voice X falls 2 notes ago, voice Y rises 10 notes ago, and voice Z has maximum pitch now". In that case the duration fields will be [2, 10, 1] for voices [X Y Z]. The pattern will also match [2, 8, 1], where Y rose 8 notes ago instead. This allows the system to match a larger class of patterns, independent of the exact time of occurrence and of which voice the notes belong to.
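As a minimal sketch of how the elapsed fields described above could be derived, the snippet below subtracts each element's time from the current time. The function name `elapsed_fields` and the use of note indices as time stamps are assumptions made for illustration; they do not come from the system itself.

```python
def elapsed_fields(event_times, now):
    """Return how long ago each pattern element occurred, relative to `now`.

    Times are counted in notes here, which is an assumption of this sketch.
    """
    return [now - t for t in event_times]


# Five consecutive notes always yield the same elapsed fields,
# no matter where in the piece they occur.
first_run = elapsed_fields([10, 11, 12, 13, 14], now=14)
second_run = elapsed_fields([30, 31, 32, 33, 34], now=34)

# Elements drawn from three different voices (hypothetical event times):
# X fell at note 18, Y rose at note 10, Z peaked at note 19.
cross_voice = elapsed_fields([18, 10, 19], now=20)
```

Here `first_run` and `second_run` are both `[4, 3, 2, 1, 0]`, and `cross_voice` is `[2, 10, 1]`. How closely two elapsed fields must agree for a match (for instance 10 versus 8) is decided by the matching step, which this sketch does not cover.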

Without such a duration field, the system could be trained to recognize only patterns that depend on exact time relationships. The varying duration field allows for more dynamic relationships between voices. Since pattern tables are created dynamically, depending on what data is salient, the relationships to look for need not be hard-coded. A different pattern table will be created for each combination of notes, but that is another story, which belongs to the pattern matching domain.

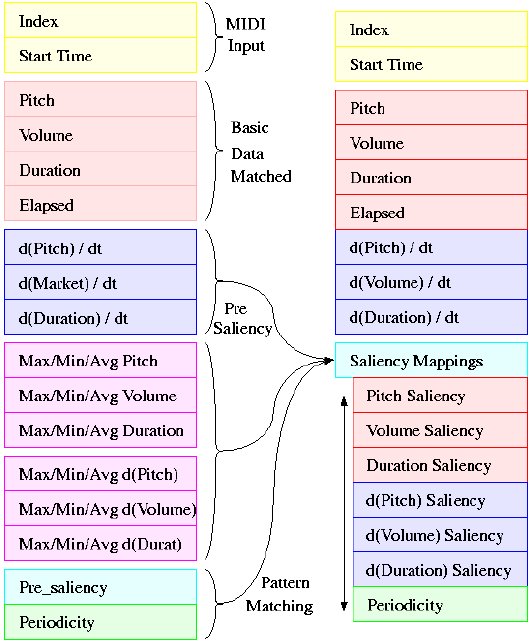

In the diagram above, the yellow boxes represent the data received directly from the MIDI file. The red boxes hold the transformed data, as described above, that will be used in matching patterns. The particular voice is not matched within the pattern element, but is encoded in the pattern table. Within a pattern table, the same element will always come from the same voice.

The input vector is augmented by the derivatives of the three input values, represented in blue. Only the basic values and their derivatives are matched when comparing elements. The saliency values are simply there to determine what is important when making a match, but they are not matched themselves.
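The augmentation step can be sketched as follows. This assumes that the three values are pitch, volume, and duration, that a "derivative" is the difference from the previous note in the same voice, and that the first note gets a zero derivative; none of these details are confirmed by the text, and `augment_with_derivatives` is a hypothetical name.

```python
def augment_with_derivatives(notes):
    """Append first differences to each (pitch, volume, duration) tuple.

    Assumption: the derivative of a field is its change since the
    previous note, and the first note has a derivative of zero.
    """
    augmented = []
    previous = None
    for note in notes:
        if previous is None:
            deltas = (0, 0, 0)
        else:
            deltas = tuple(cur - old for cur, old in zip(note, previous))
        augmented.append(note + deltas)
        previous = note
    return augmented


notes = [(60, 100, 1), (62, 90, 1), (59, 90, 2)]
vectors = augment_with_derivatives(notes)
```

Each entry of `vectors` now holds six values: the three basic values followed by their three derivatives.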

As an intermediate step, the maximum, minimum, and average values of each of the six fields are calculated over the window size (magenta), and they are used to calculate the saliency maps (cyan). The window size determines how far back in the past we look for normalizing values when comparatively analyzing the current data. Values are matched within patterns relative to those minimum, maximum, and average values, as will be described later on. They also serve as a normalization step, so that data given in different units can be compared in an unbiased way.
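A rough sketch of the windowed statistics for a single field is given below. The min-max rescaling in `normalize` is an assumption chosen for illustration, since the text does not state the exact formula, and both function names are hypothetical.

```python
def window_stats(values, window_size):
    """Return (min, max, avg) over the most recent `window_size` values."""
    if window_size < 1:
        raise ValueError("window_size must be at least 1")
    recent = values[-window_size:]
    return min(recent), max(recent), sum(recent) / len(recent)


def normalize(value, lo, hi):
    """Rescale `value` to [0, 1] relative to the window's min and max.

    Assumption: a flat window (lo == hi) maps to the midpoint 0.5.
    """
    if hi == lo:
        return 0.5
    return (value - lo) / (hi - lo)


pitches = [55, 60, 64, 67, 72, 66]
lo, hi, avg = window_stats(pitches, window_size=4)  # looks at 64, 67, 72, 66
relative = normalize(pitches[-1], lo, hi)
```

With a window of 4, the statistics are `lo = 64`, `hi = 72`, and `avg = 67.25`, so the current pitch 66 maps to `0.25`. Rescaling each field in this way is one means of making pitch, volume, and duration comparable despite their different units.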

Along with the other saliencies, a periodicity saliency (green) is calculated, which marks a note as salient when salient notes have recurred periodically in its history.

Thus, from a 3-value input (pitch, volume, time) we have arrived at a 7-value vector (pitch, volume, duration, their derivatives, and time elapsed) to match upon. With periodicity added, each vector also has an 8-field saliency, which determines which values should be matched more carefully.
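The resulting data layout could be represented roughly as below. The class and field names are hypothetical, and the reading of the 8 saliency fields as one weight per matched value plus one for periodicity is an assumption of this sketch.

```python
from dataclasses import dataclass, astuple


@dataclass
class MatchVector:
    """The seven values that are compared when matching elements."""
    pitch: float
    volume: float
    duration: float
    d_pitch: float
    d_volume: float
    d_duration: float
    elapsed: float


@dataclass
class PatternElement:
    """A match vector plus saliency weights, which are not matched themselves."""
    values: MatchVector
    saliency: tuple  # assumed: 7 per-value weights followed by periodicity

    def __post_init__(self):
        if len(self.saliency) != 8:
            raise ValueError("expected 8 saliency weights")


element = PatternElement(
    values=MatchVector(62, 90, 1, 2, -10, 0, 3),
    saliency=(0.9, 0.2, 0.1, 0.7, 0.3, 0.0, 0.1, 0.5),
)
matched = astuple(element.values)
```

Keeping the saliency separate from the matched values mirrors the distinction drawn earlier: saliency indicates what matters in a comparison without being compared itself.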
