9.35 Illusion Laboratory

Spring 2021 Auditory Lab

How is speech grouping affected when the binaural cues (ILDs) are changed in a way that does not reflect naturalistic scenarios?

In Demo #7, we heard the effect that different binaural cues had on grouping: if a continuous tone and a masking noise were both presented to the same ear, we perceived that the tone disappeared into the noise; however, if the tone was presented to one ear and the masking noise to both, we perceived that the tone continued through the masking noise because we interpreted each as coming from different locations and therefore being different streams. In this illusion, we sought to determine how changing the binaural cues of a speech audio in different ways affected our ability to group the sounds into a single stream of speech. We hypothesized that our ability to understand the speech would decrease somewhat but not completely because we are highly attuned to language, and this would probably offset the effect of the different ILD’s.

Use headphones please.

1. Alternating the sound quickly between the ears made the sounds rough but kept the sentences understandable:

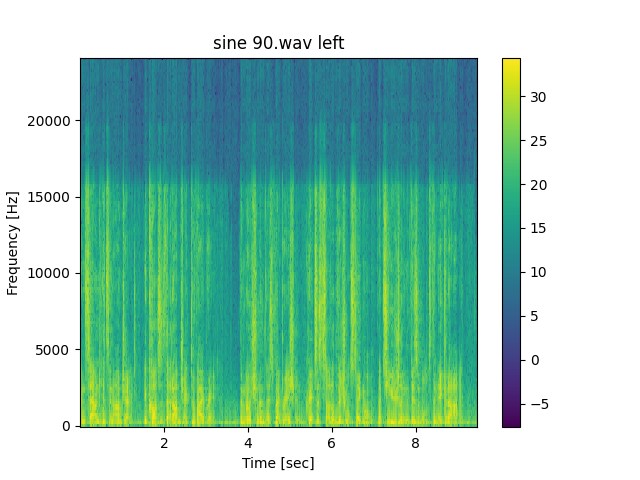

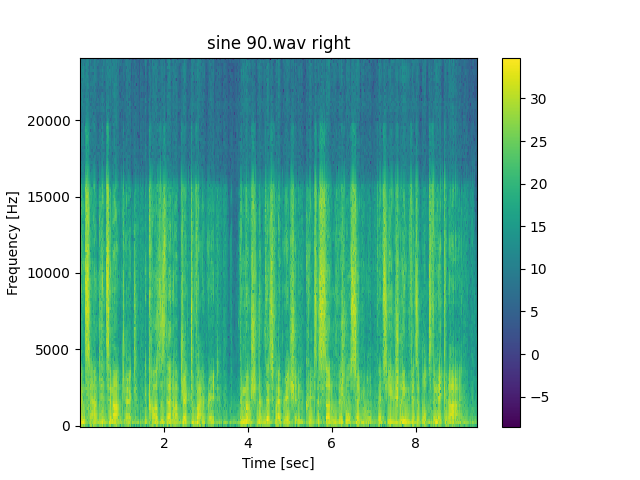

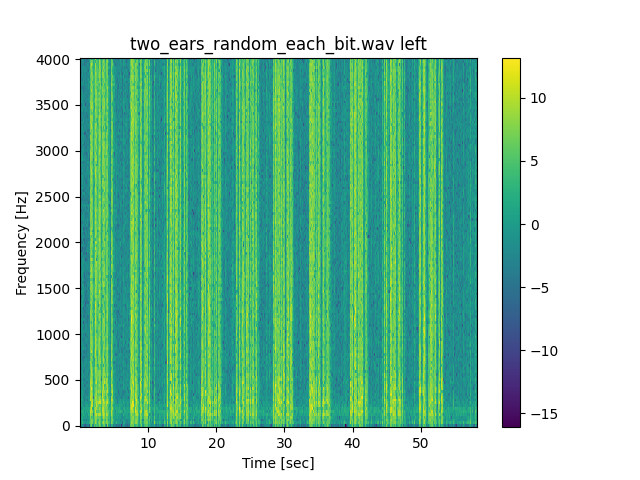

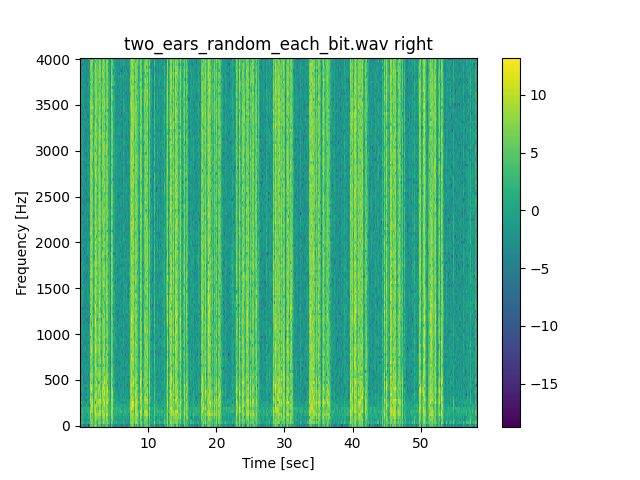

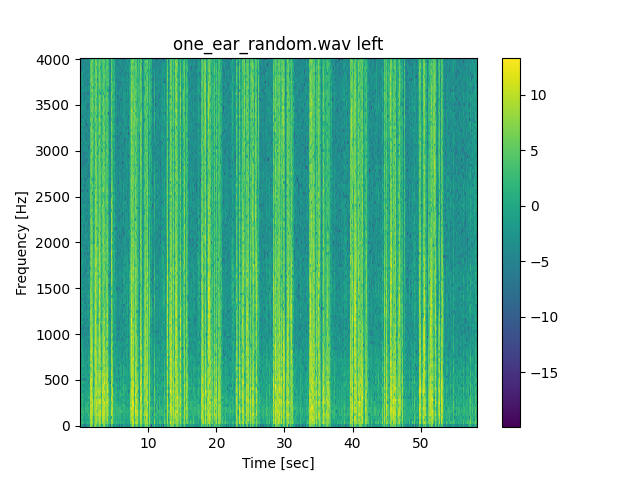

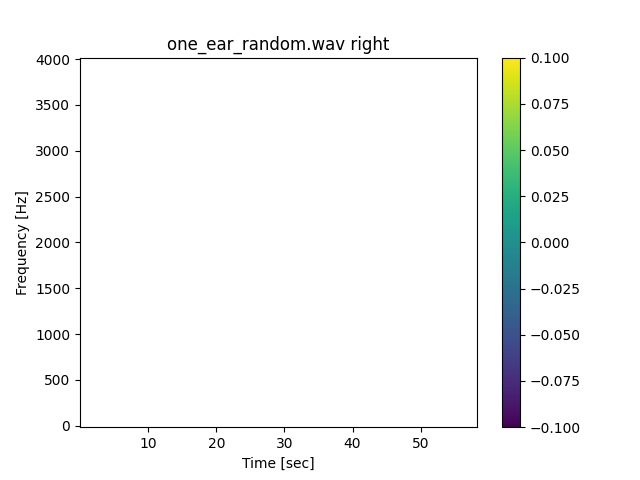

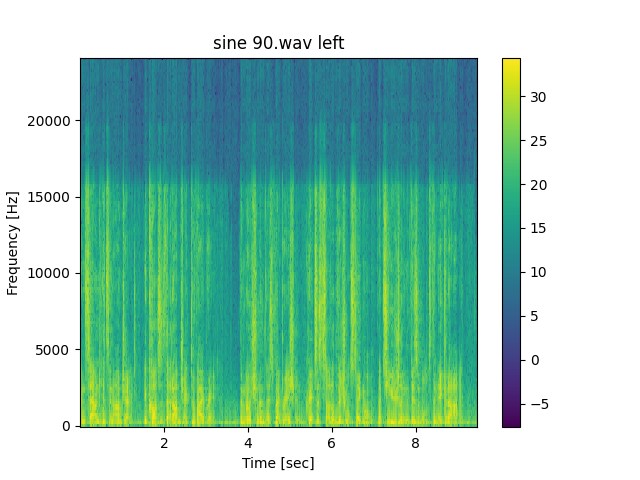

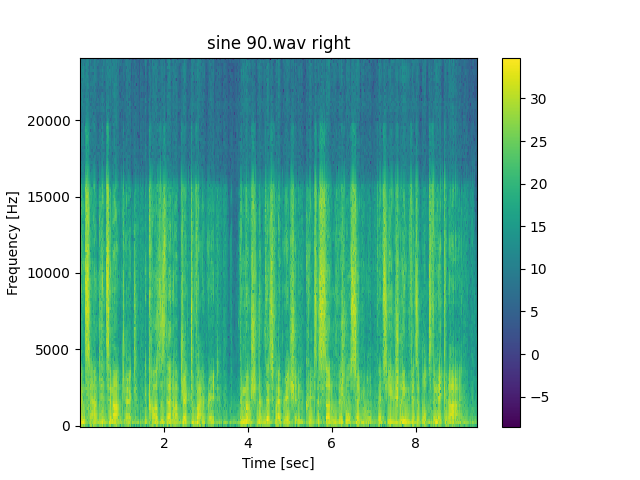

In the first audio, we took a sentence and distributed its sound between the ears using two sine waves, one for each ear, that each peaked when the other troughed, and we made the frequency of the waves 90 Hz. This meant that the sound transferred from one ear to the other smoothly but quite unnaturally, as sound doesn’t usually travel through the inside of your head to go from one ear to the other. In the second audio, we took each bit of sound and with uniform probability assigned it to either play in the left or the right ear. What we found is that we could still understand the sentences pretty well, and we believe that this is partly because what each ear misses is so small, that our brain fills in the gaps.

90 Hz panning sine wave:

Randomly assigned samples:

2. It’s easier to understand a sentence via a stereo sound with unnatural ILD’s than via a sound that plays in only one ear that is missing the same expected number of bits that each ear is missing in the stereo sound.

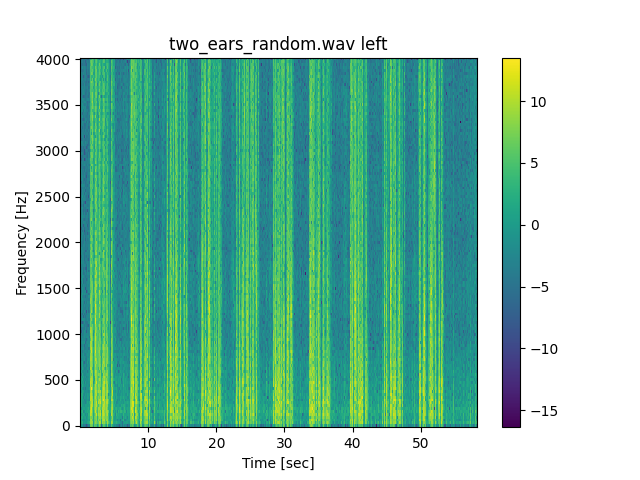

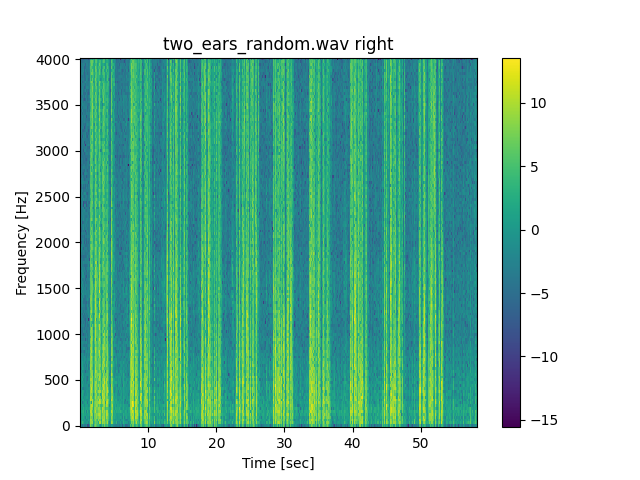

After the last demo, we theorized that our brain may fill in the gaps of silences that occur in each ear when we alternate sound locations, so we wanted to ask whether the ears actually worked together when deciphering a sentence. For this demo, we also used randomized assignments, but instead of assigning each bit separately, we assigned each chunk of 8 bits randomly to each ear. This is because we wanted the gaps to be more perceivable. In the first audio, we keep the right sound silent but play the left sound with randomly eliminated chunks of 8 bits. If we’re only relying on our brain to fill in the gaps of silence and not the input to both ears to work together, this should allow us to understand the sentences equally well. In the second audio we play the stereo sound. We found that it was easier to understand the stereo sound than the sound for just the left ear. We believe that this indicates that despite the fact that we expected each stream to be treated separately because of the differences in their locations, both ears still work together to create a single stream of noise.

Randomly assigned 8 sample chunks, left ear only:

Randomly assigned 8 sample chunks:

3. Whether we perceive the transfer of sound from one ear to the other smoothly depends on the speed of transfer.

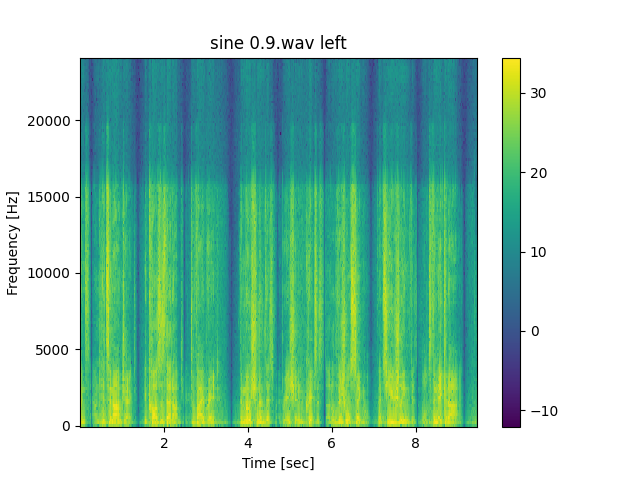

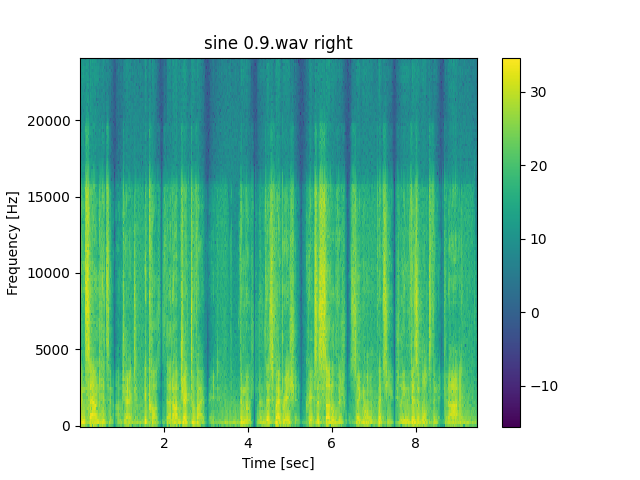

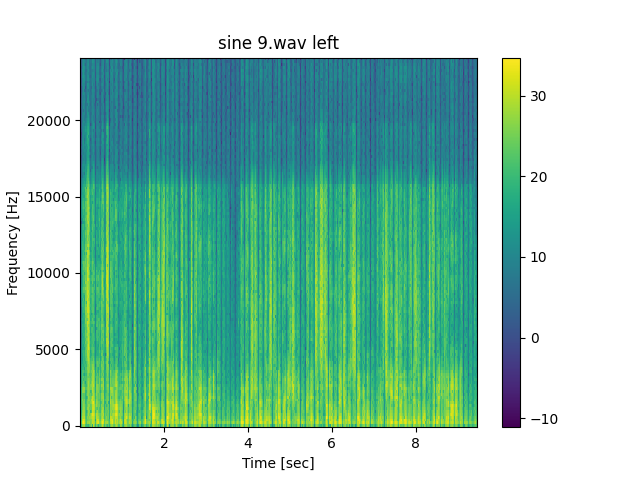

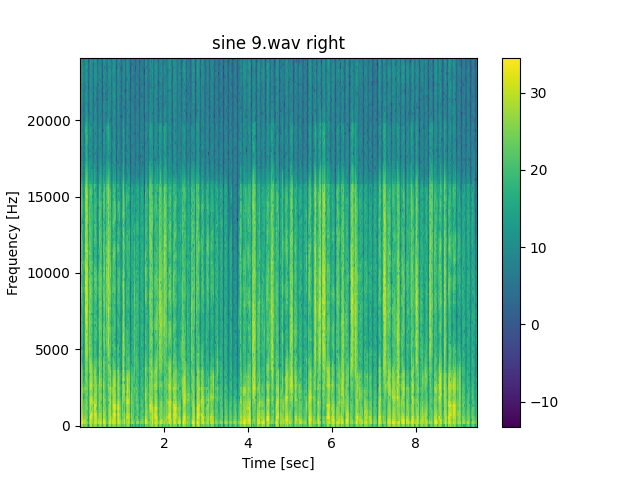

In this part, we used the sine wave transfer from part 1, but varied the frequencies to have audios of 0.9 Hz, 9 Hz, and 90 Hz. We found that for 0.9 Hz, we could clearly perceive that the sound traveled through our head from one ear to the other, but that for 90 Hz, we heard a single, rough sound. The same thing happened with the audios of random assignment. We believe that this may indicate that if a sound transfers too fast, our brain perceives the average location.

0.9 Hz panning sine wave:

9 Hz panning sine wave:

90 Hz panning sine wave:

4. When the bits were randomly assigned, we perceived a beating sound, which was faster and louder if the transfer happened more often, up until a certain point.

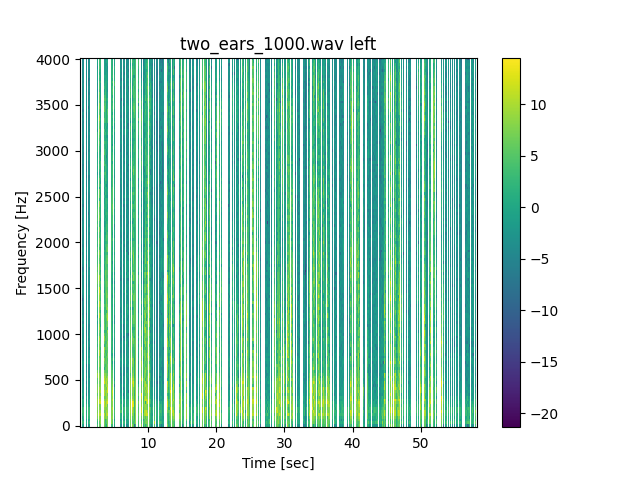

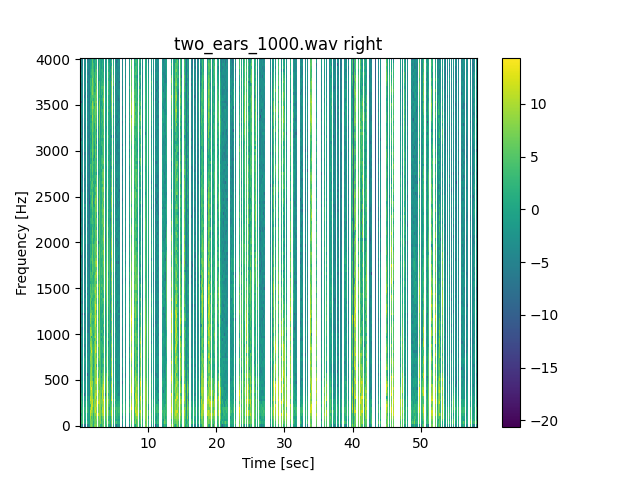

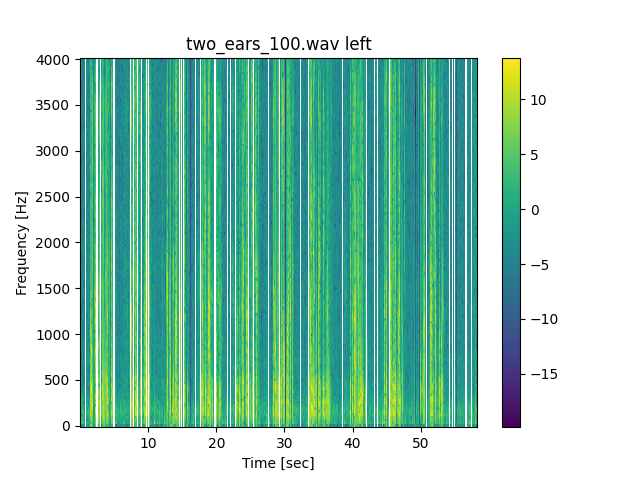

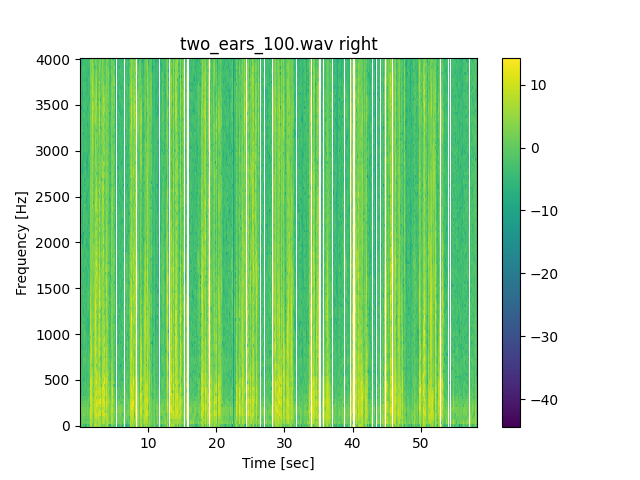

In the first audio, each chunk of 1000 audio bits is being randomly assigned, and we perceived a beating sound at each transfer. In the second audio, each bit of 100 audio bits is being randomly assigned, and we perceived a louder, more intense beating sound. In the last audio, each individual bit was assigned, and the sound is rough, but we don’t hear loud beats as in the previous one. We believe that this supports our theory that if a sound transfers too fast, our brain perceives the average location.

Randomly assigned 1000 sample chunks:

Randomly assigned 100 sample chunks:

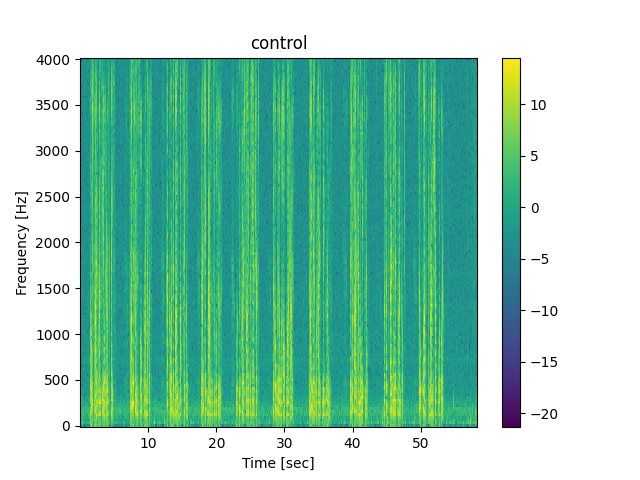

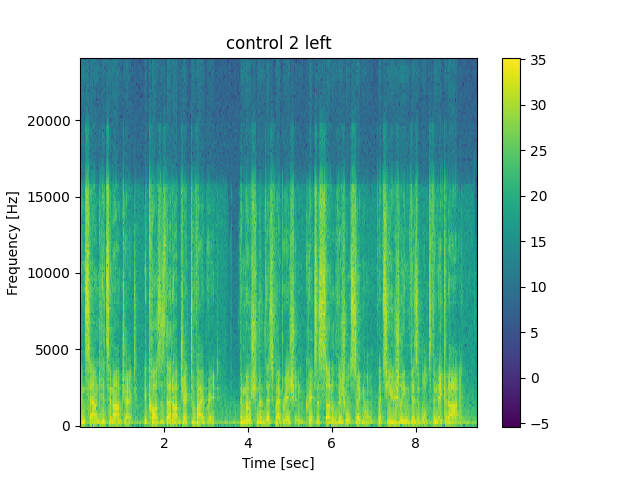

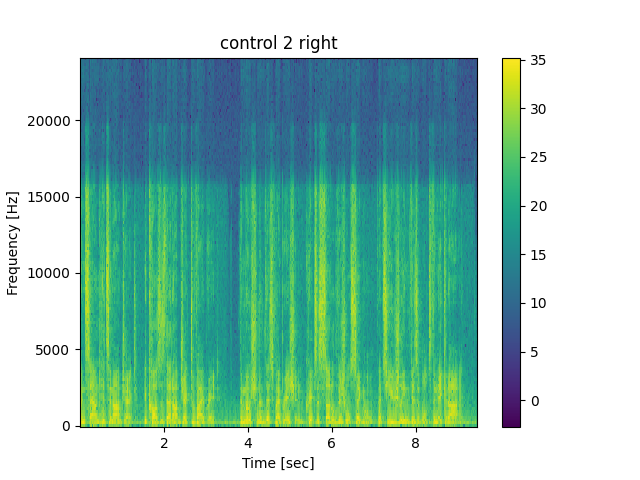

5. Controls:

Series of sentences used for random assignment audio:

Series of sentences used for sine panning audio:

Works Cited

Hirsh, I. J. (1948). The influence of interaural phase on interaural summation and inhibition. The Journal of the Acoustical Society of America, 20(4), 536–544. https://doi.org/10.1121/1.1906407

Remez, R. E., Rubin, P. E., Berns, S. M., Pardo, J. S., & Lang, J. M. (1994, January). On the Perceptual Organization of Speech. Psychological review. Retrieved March 16, 2023, from https://pubmed.ncbi.nlm.nih.gov/8121955/

Open Speech Repository |. (n.d.). Www.voiptroubleshooter.com. Retrieved March 17, 2023, from https://www.voiptroubleshooter.com/open_speech/american.html

Abe Davis’s Research. (2014, August 4). The Visual Microphone: Passive Recovery of Sound from Video. Www.youtube.com. https://www.youtube.com/watch?v=FKXOucXB4a8