| Vol. XXX No. 1 September / October 2017 |


Teach Talk

How Deeply Are Our Students Learning?

We write about a long-standing global issue related to students’ mastery of fundamental STEM knowledge. Namely, when students are asked questions that test deep understanding, they often guess and merely manipulate symbols without insight – even though they can solve traditional homework and exam questions.

At a time of great change in education, we should solve this problem first in order to place subsequent educational changes on solid ground. We do not blame the students. Rather, it is a problem that we as a faculty have created and have the responsibility, and perhaps the knowledge, to solve. Here are two examples of the phenomenon, which we find in every field of introductory science and engineering that we have taught, including dynamics, computation, calculus, and signals and systems.

-

Students who can manipulate the algebra for complicated statics and dynamics problems often cannot use Newton’s laws and free-body diagrams, the bases of dynamics, to model the world. - Students who can evaluate integrals with facility often cannot set up the same integrals

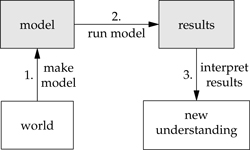

from a description of the underlying physical process. Students live in the upper world of mathematics, where they competently execute the second step: running the model. However, they have much more trouble with the surrounding skills: making the model and interpreting its results. For them, mathematics is not a tool for understanding or changing the world.

from a description of the underlying physical process. Students live in the upper world of mathematics, where they competently execute the second step: running the model. However, they have much more trouble with the surrounding skills: making the model and interpreting its results. For them, mathematics is not a tool for understanding or changing the world.

The problem seems limited neither to our students nor to the United States. We have seen the same phenomenon at other selective institutions, including Olin College and the University of Cambridge. And the research in this area that we will describe ranges from the UK to North America (Berkeley, University of Washington, Harvard). The extended range of the problem might even be welcome, for we can benefit from shared and wide experience. Noted physicist and science educator Carl Wieman, in an NPR interview about his recent book on transforming science education [19], described noticing the same problem 30 years ago: “I was surprised . . . . [T]he [graduate] students coming in [to] my physics research lab – they had done great in all these courses but they didn’t seem . . . to know how to do physics.” [“The College Lecture? Nobel Laureate Gives it a Failing Grade,” KQED Radio, May 25, 2017, online at https://ww2.kqed.org/forum/2017/05/24/nobel-laureate-carl-wieman-wants-to-to-end-the-college-lecture/ at 24:30.]

The physics-education research community has investigated this area [1, 6, 7, 9, 10, 13]. At MIT, the TEAL project in physics [3, 4] showed that the problem also occurred at MIT, and the project developed conceptual questions to improve students’ understanding of mechanics and electromagnetism. In this article, we amplify that conclusion, indicating that the problem of rote symbol manipulation remains deep and more widespread than we as a faculty have thought and that current solutions have been insufficient. We also discuss why the severity of the problem is not widely appreciated, its consequences for our students, and the benefits of solving it.

1 Examples

In the first examples below, we focus on dynamics (motion and force), which has the most extensive studies. However, as we then illustrate with examples from programming and circuits, the phenomenon seems discipline agnostic.

1.1 Motion

Fundamental to modern physical science and engineering is motion: its prediction and production. Here we give examples of students’ difficulties even describing motion. The first comes from J. W. Warren, who taught physics at Brunel University in London. He asked engineering and science majors first to identify the vectors among speed, velocity, and acceleration. Then he gave them the diagram shown and the following problem [17]:

[Figure: the particle’s path, including a semicircular section]

A particle moves in the path shown, the speed increasing uniformly with time in the semicircular section, from 10 m/s to 12 m/s. For this section of the path calculate the averages of (a) the velocity and (b) the acceleration. (Use π = 22/7.)

Warren reported [18, p. 2] that “correct answers to these questions are practically never obtained.” We have tried this question with second- and third-year students at Olin and MIT and can confirm Warren’s lament. In the Olin course (Mechanical Engineering Dynamics), one student (out of 24) solved part (a) correctly, and another student solved part (b) correctly. In the MIT course (16.07: Aero/Astro Dynamics), one student (out of 35) solved part (b) correctly, and no student solved part (a) correctly.
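For reference, here is a sketch of one way to work the problem. It assumes that the semicircle has radius R (a symbol introduced here; the actual value comes from the diagram) and that the particle enters and leaves the semicircle along tangents, so that its initial and final velocities are antiparallel. Because the speed increases uniformly with time, the average speed is 11 m/s, and the time T spent on the semicircle follows from the arc length:

$$T = \frac{\pi R}{11\ \text{m/s}}$$

The average velocity is the displacement (the diameter, 2R) divided by T, directed from the start of the semicircle to its end:

$$|\bar{\mathbf{v}}| = \frac{2R}{T} = \frac{22\ \text{m/s}}{\pi} = 7\ \text{m/s} \quad (\pi = 22/7)$$

The average acceleration is the change in velocity divided by T. Since the final velocity (12 m/s) points opposite to the initial velocity (10 m/s), the change in velocity has magnitude 22 m/s:

$$|\bar{\mathbf{a}}| = \frac{22\ \text{m/s}}{T} = \frac{242\ \text{m}^2/\text{s}^2}{\pi R} = \frac{77\ \text{m}^2/\text{s}^2}{R} \quad (\pi = 22/7)$$

Under these assumptions the average velocity does not depend on R, whereas the average acceleration does.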


A second example comes from Reif and Allen at Berkeley and their colleagues at the University of Washington. They asked students to give the acceleration (as a vector) of a pendulum bob at five points as it swings between the extremes at A and E. Their results are sobering [13, p. 19]:

Of 124 students who had studied acceleration in the introductory physics course, none could answer this problem correctly; of 22 graduate-student teaching assistants, only 15% could answer it correctly; and of 11 graduate students on their PhD qualifying exam, only 20% could answer it correctly.
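The physics behind the expected answer can be sketched in a few lines of Python. This is a minimal sketch under stated assumptions, not the researchers' own solution: the pendulum is ideal, it is released from rest at an assumed amplitude `theta_max`, and the labels A through E are assumed to be evenly spaced in angle between the two extremes. The function name and the 30-degree amplitude are illustrative only.

```python
import math


def pendulum_acceleration(theta, theta_max, g=9.8):
    """Acceleration of an ideal pendulum bob released from rest at theta_max.

    Angles are in radians, measured from the vertical.
    Returns (tangential, radial) components in m/s^2:
    tangential is positive in the direction of increasing theta,
    radial is positive toward the pivot.
    """
    # Gravity's component along the arc always points back toward the bottom.
    a_tangential = -g * math.sin(theta)
    # Energy conservation gives v^2 = 2 g L (cos(theta) - cos(theta_max)),
    # so the centripetal acceleration v^2 / L does not depend on the length L.
    a_radial = 2 * g * (math.cos(theta) - math.cos(theta_max))
    return a_tangential, a_radial


theta_max = math.radians(30)  # assumed amplitude, for illustration only
angles = [-theta_max, -theta_max / 2, 0.0, theta_max / 2, theta_max]
for label, theta in zip("ABCDE", angles):
    a_t, a_r = pendulum_acceleration(theta, theta_max)
    print(f"{label}: tangential = {a_t:+.2f} m/s^2, radial = {a_r:+.2f} m/s^2")
```

In this model the acceleration is purely tangential at the extremes, purely radial (pointing up toward the pivot) at the lowest point, and a combination of the two in between.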

1.2 Force

As important as motion is its cause, force – where students’ understanding also falters. A fundamental aspect of force is that it is one side of an interaction between two objects. To test students’ understanding of this property, Terry and Jones [15] in the UK gave four problems to 16-year-old students after O-level Physics (roughly comparable to AP Physics). Here is one problem.

[Figure: a person standing on the ground, with the gravitational force Fg of the earth on the person]

The diagram shows a person standing on the ground and the gravitational force Fg of the earth on the person. What is the Newton’s-third-law force that is paired with Fg?

Only two students out of 39 correctly answered with the gravitational force on the earth. Of the 37 wrong answers, two-thirds were the contact force – indicating students’ deep confusion between Newton’s second and third laws. We have found similar results: At Olin, in Mechanical Engineering Dynamics, the correct force was named by one out of 24 students.

We have also asked a second-law question of students in 2.003 (Mechanical Engineering Dynamics), in 16.07 (Aero/Astro Dynamics), and in Mechanical Engineering Dynamics at Olin.

A small steel marble with mass m is dropped 1 meter onto a steel table, from which it bounces upward and almost perfectly elastically. At the instant during the bounce when the ball’s center of mass is stationary, what force does the table exert on the ball? (a) 0 (b) mg (c) 2mg (d) >2mg

The correct answer, F > 2mg, was chosen by only 20 percent of our students. The rest of the answers are mostly divided between mg, which violates Newton’s second law, and 2mg, which, while possible theoretically, does not represent any steel ball bouncing in the real world.
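A rough estimate shows why the force must be far larger than 2mg. The sketch below applies the impulse-momentum relation, (F_avg − mg)Δt = 2mv, to a nearly elastic bounce. The contact time Δt is not given in the problem; the default of 0.1 milliseconds is purely an illustrative assumption, as are the function and parameter names. The average force over the contact is a lower bound on the peak force, which occurs near the instant when the ball's center of mass is stationary.

```python
import math


def average_bounce_force_ratio(drop_height=1.0, contact_time=1e-4, g=9.8):
    """Average table force during the bounce, in units of the weight mg.

    contact_time (seconds) is an ASSUMED value for illustration; the real
    contact time depends on the ball's size and material.
    """
    # Speed just before impact, from energy conservation.
    impact_speed = math.sqrt(2 * g * drop_height)
    # Nearly elastic bounce: momentum changes by 2 m v during the contact, so
    # (F_avg - m g) * contact_time = 2 m v  =>  F_avg / (m g) = 1 + 2 v / (g dt).
    return 1 + 2 * impact_speed / (g * contact_time)


print(f"F_avg is about {average_bounce_force_ratio():.0f} times mg")
```

With the assumed contact time, the ratio comes out in the thousands. The force would fall to 2mg only if the contact lasted about as long as the flight between bounces, which is why that answer does not describe a real steel ball.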

When a problem combines motion and force, students’ confusion deepens. Again we turn to Warren [17]. His study was conducted in 1969, when the standard of A-level Physics was very high [2] (much higher than AP Physics). Given a car moving at constant speed in a circle with no wind blowing, science and engineering majors (most of whom would have taken A-level Physics) were asked for a diagram showing the resultant force, the friction force exerted by the ground on the car, and labeled arrow(s) for any other force(s) on the car (all in the horizontal plane).

In only 47 out of 148 diagrams was the resultant force the sum of the other forces, of which only 14 showed the correct, radially inward resultant. Only 3 students correctly drew the friction force’s direction. And only 13 students gave the other force correctly as air resistance. The most common answer was the centrifugal force (51/148), followed by the “driving or tractive” force (31/148), followed by the centripetal force (27/148). Our results, with mechanical-engineering students taking Dynamics, have been almost identical.

1.3 Programming

Lest we be misinterpreted as claiming that all troubles lie with physics and mechanical engineering, we quote the results of the rainfall problem. In the 1980s, Soloway [14] asked students, at the end of a one-semester course in computer science, to write a program that averages the nonnegative values in a list, stopping at the end of list or at the first -999 marker. It is a simple program. Yet, across the country, only about 30 percent of students write a correct algorithm (even allowing syntax errors). We have found similar percentages in 6.01 (Introduction to EECS I) as a pre- and a post-test. Thus, our own teaching did not help.
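For concreteness, here is one possible solution, written in Python rather than in the language of the original study. It is a sketch of the specification as described above; the function name and the choice to return `None` when there are no valid values are our assumptions, since the description does not say what to do in that case.

```python
def rainfall_average(values, sentinel=-999):
    """Average the nonnegative values, stopping at the sentinel or end of list.

    Returns None when there is nothing to average (an assumed convention).
    """
    total = 0.0
    count = 0
    for value in values:
        if value == sentinel:
            break  # the marker ends the data; anything after it is ignored
        if value >= 0:
            total += value
            count += 1
        # negative values other than the sentinel are skipped as invalid
    return total / count if count else None


print(rainfall_average([1, -2, 3, -999, 100]))
```

The parts that need thought are the interplay of the two stopping conditions, the skipped negative values, and the empty case, where a naive division by the count would fail.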

Mark Guzdial, a professor of computer science at Georgia Tech, asks [“A Challenge to Computing Education Research: Make Measurable Progress,” August 16, 2010, https://computinged.wordpress.com/2010/08/16/a-challenge-to-computing-education-research-make-measurable-progress/]:

As computing education researchers, we have both goals, to understand and to improve. After 30 years, why hasn’t somebody beaten the Rainfall Problem? Why can’t someone teach a course with the explicit goal of their students doing much better on the Rainfall Problem – then publish how they did it? We ought to make measurable progress [original emphasis].

The rainfall problem parallels the lore about the “FizzBuzz” problem: to write a program that prints the numbers from 1 to 100, one per line, with a multiple of 3 replaced by “Fizz,” a multiple of 5 by “Buzz,” and a multiple of 3 and 5 by “FizzBuzz.” The problem is successful enough at filtering applicants for software-developer positions that it is regularly used in early-stage interviews. We have not tried the problem, but it further indicates the pervasiveness of, and the need to ferret out, fundamental conceptual misunderstandings.
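As a point of reference, a minimal Python sketch of the problem as stated above might look as follows (the function names are ours):

```python
def fizzbuzz_line(n):
    """Return the line to print for the number n."""
    if n % 15 == 0:  # multiple of both 3 and 5; must be checked first
        return "FizzBuzz"
    if n % 3 == 0:
        return "Fizz"
    if n % 5 == 0:
        return "Buzz"
    return str(n)


def fizzbuzz(limit=100):
    """Return the lines for 1 through limit, in order."""
    return [fizzbuzz_line(n) for n in range(1, limit + 1)]
```

Calling `print("\n".join(fizzbuzz()))` prints the 100 lines. The usual stumbling block is the order of the tests: checking for a multiple of 3 before the combined case never produces “FizzBuzz.”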

1.4 Manipulation without understanding

The next group of examples illustrates that students can perform mathematical operations that they do not necessarily understand. As one manifestation, they can evaluate mathematical expressions that they cannot generate as a model. In this area, we do not know of a body of research but draw on our experiences.

Many areas of engineering, such as signals and systems and controls, use the idea of convolution. It typically leads to integrals of the form

$$(f * g)(t) = \int_{-\infty}^{\infty} f(\tau)\, g(t - \tau)\, d\tau$$

Given two reasonable functions f and g, our students can usually evaluate these convolution integrals. However, from a description of a physical process that produced the convolution (for example, a signal going through a filter), they cannot set up the same integral.
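The modeling step that students miss can be made concrete with a discrete sketch. It builds the output of a filter directly from the physical description: each input sample launches a scaled, delayed copy of the filter's response to a brief impulse, and the output is the sum of those copies. The function name, the sampling interval `dt`, and the sample values are illustrative assumptions.

```python
def convolve(signal, impulse_response, dt=1.0):
    """Discrete approximation to the convolution of a signal with a filter.

    signal[i] is the input at time i*dt; impulse_response[j] is the filter's
    output j*dt after a unit impulse. Each input sample contributes its own
    delayed, scaled copy of the impulse response to the output.
    """
    n_out = len(signal) + len(impulse_response) - 1
    output = [0.0] * n_out
    for i, x in enumerate(signal):                # input arriving at time i*dt
        for j, h in enumerate(impulse_response):  # its effect j*dt later
            output[i + j] += x * h * dt
    return output


print(convolve([1, 2, 3], [1, 1]))
```

Reading the double loop as “sum over all past inputs, each weighted by how the filter responds after the elapsed time” is the same reasoning that sets up the continuous integral.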

Similarly, they can evaluate integrals to calculate complicated moments of inertia (say, for a solid sphere). But, for much simpler moments, they often cannot even set up the integral.


As an example, on a recent Dynamics exam, students were asked to supply the missing integrand and limits to compute the moment of inertia of a uniform a×b×c rectangular prism rotating about the z axis through the prism’s center. Almost no student gave the correct integrand, which is just x² + y². The expressions offered instead, such as x² + y² + z², indicate that, for the student, moment of inertia has little physical meaning. As teachers, we must do better!
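For reference, the full integral can be sketched as follows. We assume, since the exam figure is not reproduced here, that the sides a, b, and c lie along the x, y, and z axes respectively; the symbols M (total mass) and ρ = M/(abc) (uniform density) are introduced for illustration. The integrand x² + y² is the squared distance of a mass element from the z axis, which is what gives the moment of inertia its physical meaning:

$$I_z = \int_{-c/2}^{c/2} \int_{-b/2}^{b/2} \int_{-a/2}^{a/2} \rho\,(x^2 + y^2)\; dx\, dy\, dz$$

$$I_z = \rho \left( \frac{a^3}{12}\, b\, c + a\, \frac{b^3}{12}\, c \right) = \frac{M\,(a^2 + b^2)}{12}$$

The height c along the rotation axis drops out of the result, as it should: sliding mass parallel to the axis does not change its distance from the axis.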


A similar example of students’ difficulty making models is in comparing two medical treatments using Bayesian statistics. To compute the probability that one treatment is better than the other, one integrates a joint probability density over the shaded region, where y>x and x,y<1. In our experience, few students can determine the limits on the double integral – although, given the limits (the model), they readily evaluate the integral.
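As a sketch of the model that students struggle to write down, let p(x, y) denote the joint probability density (a symbol introduced here), and assume that x and y are the two treatments' success rates, each lying between 0 and 1. The lower limit of 0 is our assumption; the text above states only the upper bounds. Then:

$$P(y > x) = \int_{0}^{1} \int_{x}^{1} p(x, y)\; dy\, dx$$

For each value of x, the inner integral sweeps y from the diagonal y = x up to 1. Equivalently, one can integrate x from 0 to y in the inner integral and y from 0 to 1 in the outer one.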


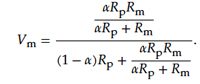

A related manifestation of the same problem is that students can make mathematical models that they cannot use. An example comes from the circuits unit in 6.01. The students had, in small groups, built working 0-to-10-volt outputs controlled using a variable resistor with total resistance Rp=5000 ohms.

Students had also powered a LEGO motor with an official 10-volt supply, finding that the motor turned on even at 0.3 volts. And, unpowered, it had a resistance of 5 ohms.


Now they connected the motor to their own 0-to-10-volt output. Yet the motor would not turn on, no matter how they turned the dial on the variable resistor. Their problem was to explain what went wrong. Almost without exception, they applied the parallel-resistance and voltage-divider formulas several times and got this monstrous expression for the motor voltage:

This result was too complicated to offer any understanding of why the motor would not turn on, so students simply plotted the output voltage using a symbolic-mathematics package or service (such as Wolfram Alpha). As Victor Weisskopf, formerly chair of the Physics Department, often said (quoted by Sherry Turkle [16]): “When you show me that result, the computer understands the answer, but I don’t think you understand the answer.”

No circuit designer would or should reason as our students did. Rather, she would apply the meaning of the parallel-resistance formula – that currents prefer low-resistance paths – and see that almost all the current flows through the motor rather than the bottom resistance aRp. Then most of the 10 volts appears across the top resistance, which is much larger than the motor’s few ohms, leaving too little voltage across the motor to turn it on. But this kind of reasoning was not conveyed successfully in our instruction.
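The designer's reasoning can be checked numerically with a short sketch. It assumes an idealized circuit that may differ in detail from the one the students built: a 10-volt supply across the variable resistor, with the top portion (1 − a)Rp in series with the parallel combination of the bottom portion aRp and the motor. The dial fraction `a` and the function names are illustrative; the component values are those quoted above.

```python
def parallel(r1, r2):
    """Equivalent resistance of two resistors in parallel."""
    return r1 * r2 / (r1 + r2)


def motor_voltage(a, supply=10.0, r_pot=5000.0, r_motor=5.0):
    """Voltage across the motor for dial fraction a (0 <= a <= 1).

    ASSUMED topology: the bottom resistance a*r_pot is in parallel with the
    motor, and that combination is in series with the top (1 - a)*r_pot.
    """
    bottom = parallel(a * r_pot, r_motor)  # about r_motor: the low-resistance path wins
    top = (1 - a) * r_pot
    return supply * bottom / (top + bottom)  # voltage divider


for a in (0.25, 0.5, 0.9):
    print(f"a = {a}: motor voltage = {motor_voltage(a):.3f} V")
```

With these values, the motor at mid-dial sees roughly 0.02 volts, far below the 0.3 volts at which it was observed to turn on. Because the parallel combination is always close to the motor's 5 ohms, the divider is approximately 10 V × 5/((1 − a)Rp + 5), a form short enough to reason with.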


2 Why haven’t we seen this phenomenon as a problem?

A natural question is why we as a faculty have not pursued rote symbol manipulation as an existential problem. And why are its depth and implications not more widely appreciated? Here are three possible reasons.

First, the deep misunderstandings are in fundamental and seemingly basic material. So we easily assume that students have mastered it and its real-world connections, and do not probe more deeply. In the modeling process (above), we emphasize the second step, in the math world, implicitly assuming students’ mastery of the first and third steps, making and interpreting the model.

Second, homework problems, which can be longer and probe more deeply than problems on a short in-class exam, are often solved in groups. Thus, we do not measure how much each student in the group understands, or know whether one student has understood the ideas and simply told the rest.

Third, we may fear to know – and rightly so. Grasping the depth of the problem would almost compel us to correct it. But how can we, without transforming our pedagogy and every course, from the GIRs to the most advanced? Individual solutions are too hard. Collectively, however, we might solve this problem, which is the reason for this article.

As another natural question, haven’t researchers in STEM education also identified these problems and shown their solution? We do not believe so. The severity of the problem is hard to grasp, even for the learning-research communities. Thus, standard solutions, such as “active learning” and “student engagement,” do not reach the roots of the problem and are useful more as pointers to the direction to work.

As an example of the depth of engagement that we do seek, here is how one can help students understand, in their gut, the huge forces on the steel marble bouncing off a steel table (above). Take a small rock and rest it on a student volunteer’s hand, which rests on a table. “Does it hurt?” “No? Good, so the force mg on your hand is not too much.” Now raise the rock to about 1 meter and count down to dropping it. As the count nears zero and the student instinctively yanks his or her hand away, play shocked: “But you told me that during the bounce the force is also mg, and mg didn’t hurt. Why are you moving your hand now?” For the many students who now say, “Oh, the force is 2mg,” rest two rocks on their hand, to show that 2mg also does not hurt, and threaten to drop the single rock.

In this kind of engagement, which taps our brains’ narrative, visual, and tactile co-processing, we help students bring forth the correct part of their internal gut model: that the force from the rock must be painfully huge, far larger than mg and 2mg (it’s actually a few thousand mg). Then we can help them to express that gut understanding using force and acceleration and thereby to give meaning to the fundamentals of dynamics.

3 Consequences

But there is a preliminary question. If our students, among the most highly selected in the world, have such difficulties even when taught by world experts, how much of a problem is rote symbol manipulation? For the following reasons, we think that it is a large problem.

First, rote symbol manipulation provides only a shaky foundation of knowledge. Knowledge built on this foundation will be still shakier. What should form a solid foundation for downstream courses becomes a sandcastle base.

Second, rote symbol manipulation makes teaching a downstream course difficult. After grasping this problem, a single instructor is in a dilemma: “Should I return to and rework the upstream knowledge (often from high school) to rebuild a rock-solid foundation, and risk not building the next required floor? Or should I just build the next floor and hope that it stays upright, although it stands on sand?”

Third, shaky learning is ephemeral, making students study longer and sleep less – a recipe for stress. Shaky learning leads to less transfer and less interest and even to burnout, because the limited learning does not compensate for the large stress.

Fourth, when students lack deep understanding, they are forced to ignore their intuition because it is wrong so often. The divorce between intuitive and symbolic models of the world is memorably described by Eric Mazur at Harvard. He had given his students the Force Concept Inventory [7], a diagnostic test of students’ reasoning about force and motion. One student asked him [9, p. 4], “Professor Mazur, how should I answer these questions? According to what you taught us, or by the way I think about these things?”

The consequence for engineers and scientists is, in Middlebrook’s term [11], “falling off a cliff.” Its origin is shown in the contrast between analysis and design.

Much of what we teach in our technical courses is how to go from a system to its behavior or, in science, from a theory to its predictions. This teaching follows the analysis arrow. Yet, as practicing engineers or scientists, our onetime students have to solve the opposite problem. Given a desired behavior, they must find the system. Or, given data, they must find an explanatory model (a theory). This task follows the design arrow.

But when they merely manipulate symbols, their design process degenerates to the following. First, guess a possible system. Second, predict its behavior using a simulation system. Third, compare the predicted and desired behaviors. Until they match, try again by guessing another system.

Any real system has many tunable parameters (such as component values, forces, or material properties). So “knob-twiddling” [12] through the many-dimensional parameter space is lengthy and unreliable. A common workaround is to tweak a pre-existing design. But this method will not find novel solutions and radical improvements.

If we do not want our students to become well-paid knob twiddlers, they should understand analysis deeply, learning what Middlebrook described as “invertible methods of analysis” [11, 12]. An invertible method produces its answer – a system behavior – in an insightful form. We may get the time constant of a resistor–capacitor circuit as τ = (R1 || R2 || R3)C2 rather than as the mathematically equivalent

$$\tau = \frac{R_1 R_2 R_3\, C_2}{R_1 R_2 + R_1 R_3 + R_2 R_3}$$

Feynman [5, p. 53] emphasized the difference between mathematical and psychological equivalence: “[P]sychologically [the two forms] are different because they are completely unequivalent when you are trying to guess new laws” – or design a new system. Indeed, only the invertible form shows the engineer what parameters of the system to change in order to move the predicted toward the desired behavior. Rather than simply trying another guess, the engineer explores the parameter space intelligently and needs less luck to find radically new designs and improvements. This approach to analysis joins it tightly to design. Rigorous and invertible methods of analysis are a foundation for principled, creative design and so for engineering and scientific leadership.
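The distinction can be illustrated with a small sketch that computes the same time constant both ways. The function names and component values are illustrative assumptions; only the parallel-resistance form of the expression is taken from the text.

```python
def parallel(*resistances):
    """Equivalent resistance of any number of resistors in parallel."""
    return 1.0 / sum(1.0 / r for r in resistances)


def tau_insightful(r1, r2, r3, c2):
    """Time constant written as (R1 || R2 || R3) * C2."""
    return parallel(r1, r2, r3) * c2


def tau_expanded(r1, r2, r3, c2):
    """The same quantity with the parallel combination multiplied out."""
    return r1 * r2 * r3 * c2 / (r1 * r2 + r1 * r3 + r2 * r3)


# Assumed example values: one resistor much smaller than the other two.
r1, r2, r3, c2 = 100.0, 10e3, 47e3, 1e-6
print(tau_insightful(r1, r2, r3, c2), tau_expanded(r1, r2, r3, c2))
print(r1 * c2)  # the smallest resistor alone nearly sets the time constant
```

Both functions return the same number, but only the first form tells the designer at a glance that the smallest resistance dominates and is therefore the parameter to change.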

4 Final thoughts

The problem of rote symbol manipulation, although identified decades ago, is not solved. Even on the limited evidence offered here, the problem is difficult. Any solution would incorporate many ideas and insights. But is the problem even solvable? We do not know. However, we, as a faculty here and with our colleagues elsewhere, must try to solve it as we answer several essential questions. In particular, what is deep learning and how can we foster it? How should we change not only how we teach (the emphasis of much education research) but also what we teach and how we organize our disciplines’ ideas and ways of thinking? On the importance of progress on these questions, Jaynes [8] wrote that

the goal [of teaching] should be, not to implant in the students’ mind every fact that the teacher knows now; but rather to implant a way of thinking that enables the student, in the future, to learn in one year what the teacher learned in two years. Only in that way can we continue to advance from one generation to the next. [original emphasis]

To that end, we welcome your questions, comments, and suggestions.

References

1. Arnold Arons. Teaching Introductory Physics. Wiley, 1997.

2. Peter J. Barham. An analysis of the changes in ability and knowledge of students taking A-level physics and mathematics over a 35 year period. Physics Education, 47(2):162, 2012.

3. Yehudit Judy Dori and John Belcher. How does technology-enabled active learning affect undergraduate students’ understanding of electromagnetism concepts? The Journal of the Learning Sciences, 14(2):243–279, 2005.

4. Yehudit Judy Dori, John Belcher, Mark Bessette, Michael Danziger, Andrew McKinney and Erin Hult. Technology for active learning. Materials Today, 6(12):44–49, 2003.

5. Richard Feynman. The Character of Physical Law. MIT Press, Cambridge, Massachusetts, 1967.

6. Richard R. Hake. Interactive-engagement versus traditional methods: A six-thousand-student survey of mechanics test data for introductory physics courses. American Journal of Physics, 66(1):64–74, 1998.

7. David Hestenes, Malcolm Wells and Gregg Swackhamer. Force Concept Inventory. The Physics Teacher, 30(3):141–158, 1992.

8. Edwin T. Jaynes. A backward look into the future. In W. T. Grandy Jr. and P. W. Milonni, editors, Physics and Probability: Essays in Honor of Edwin T. Jaynes. Cambridge University Press, Cambridge, UK, 1993.

9. Eric Mazur. Peer Instruction: A User’s Manual. Prentice Hall, Englewood Cliffs, New Jersey, 1997.

10. Lillian C. McDermott and Edward F. Redish. Resource letter: PER-1: Physics education research. American Journal of Physics, 67(9):755–767, 1999.

11. R. David Middlebrook. Methods of design-oriented analysis: Low-entropy expressions. In New Approaches to Undergraduate Engineering Education IV, 1992.

12. R. David Middlebrook. Low-entropy expressions: The key to design-oriented analysis. In Frontiers in Education Conference, 1991. Twenty-First Annual Conference. Engineering Education in a New World Order. Proceedings, pages 399–403, Purdue University, West Lafayette, Indiana, September 21–24, 1991.

13. Frederick Reif. Millikan Lecture 1994: Understanding and teaching important scientific thought processes. American Journal of Physics, 63(1):17–32, 1995.

14. Elliot Soloway. Learning to program = learning to construct mechanisms and explanations. Communications of the ACM, 29(9):850–858, 1986.

15. Colin Terry and George Jones. Alternative frameworks: Newton’s third law and conceptual change. European Journal of Science Education, 8(3):291–298, 1986.

16. Sherry Turkle. Seeing through computers. American Prospect, 8(31):76–82, 1997.

17. J. W. Warren. Circular motion. Physics Education, 6(2):74, 1971.

18. J. W. Warren. Understanding Force: An Account of Some Aspects of Teaching the Idea of Force in School, College, and University Courses in Engineering, Mathematics and Science. John Murray, London, 1979.

19. Carl Wieman. Improving How Universities Teach Science: Lessons from the Science Education Initiative. Harvard University Press, Cambridge, Massachusetts, 2017.
